An Agent-Driven "Microkernel" Operating System for Backend Engineering

Engineering Manual · Quick Start

"Learning Agent architecture is like re-learning Operating Systems. History doesn't repeat, but it rhymes!"

This repository is a machine-to-machine (M2M) infrastructure. It is a Cognitive Harness—an executable protocol designed by humans, but read, interpreted, and executed exclusively by Large Language Models (LLMs).

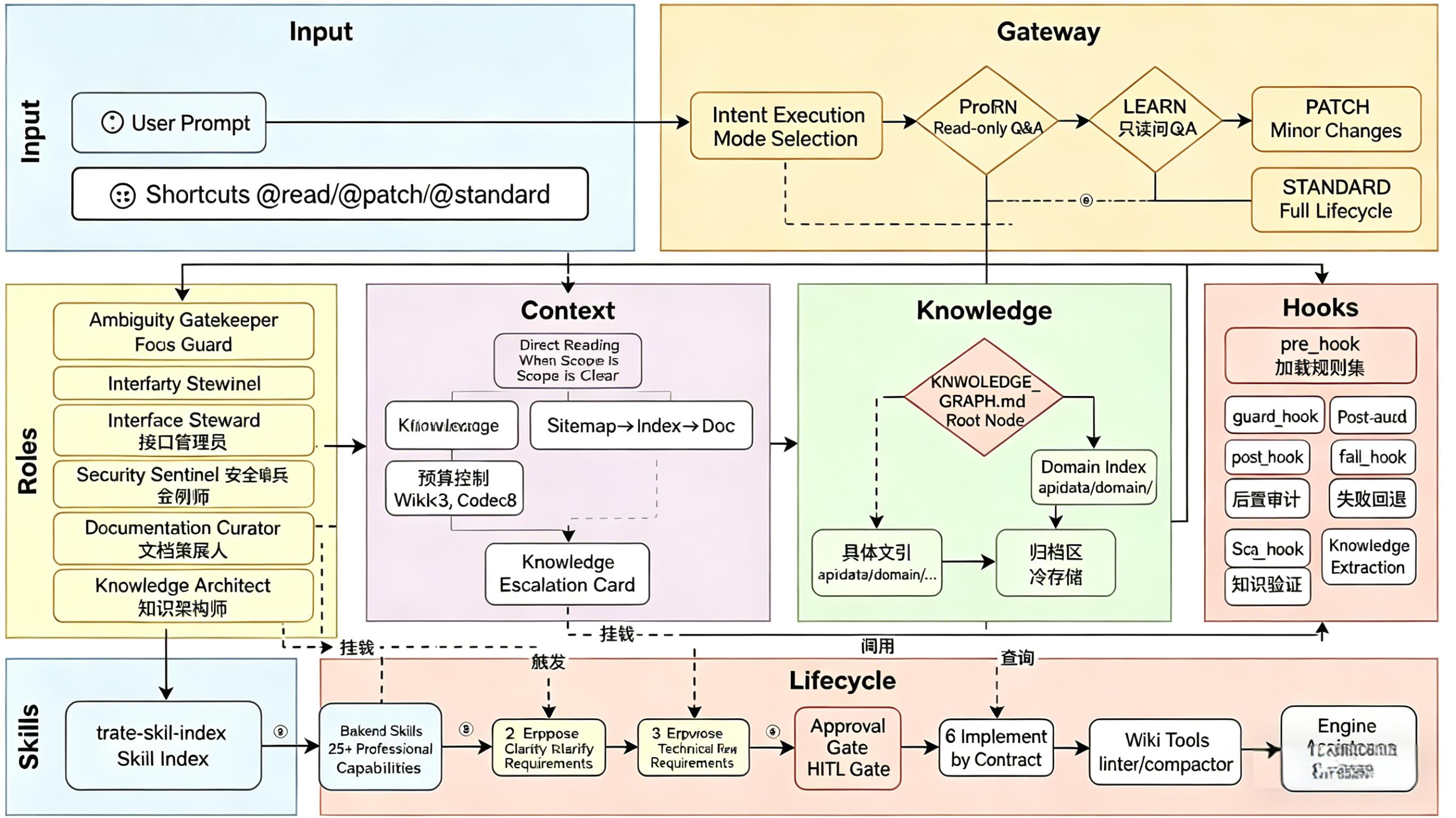

Unlike traditional Agent frameworks that act as bloated "Macro-kernels," Java Harness Agent adopts an extremely restrained Microkernel OS Philosophy:

- Process = Intent Boundary: Work that crosses intents requires explicit communication (WAL write-back).

- RAM = Context Window: Strictly scheduled by the architecture.

- System Calls = Tool Use: Traps into the kernel via system calls, authenticated by the Role Matrix.

- File System = RAG & Wiki: Mounted on demand, burned after use.

Java Harness Agent is an agent-driven backend engineering workflow designed for sustainable software evolution. It deeply integrates Cognitive Philosophy (counter-intuitive bias checks, first-principles thinking) and pioneers a Dual-Track Flow with a 4-Level Risk Matrix. Driven by a rich ecosystem of high-density Master Skills, it completely eliminates "runaway code" and "architecture rot" common in traditional Agent development.

Java Harness Agent fuses the "Contract-First" OpenSpec design philosophy with a Microkernel architecture. Through its Intent Gateway, Dual-Track Lifecycle, Vector-less Knowledge Graph (LLM Wiki), and Cognitive Brakes, it achieves a sustainable, interruptible, and self-correcting engineering closed-loop.

- 🎯 OS-Level Intent Driven: Natural language → Structured intent queues → Process-level task scheduling

- 🧠 Cognitive Philosophy: Built-in cognitive bias correction and 5-Whys decision frameworks, forcing the Agent to "think thrice" (Cognitive Brake) before acting.

- 🛤️ Dual-Track & 4-Level Risk Matrix: Differentiates between TRIVIAL (Fast-path), LOW (PATCH track), and MEDIUM/HIGH (STANDARD full 6-phase), abandoning one-size-fits-all cumbersome processes.

- 📚 Microkernel Knowledge Graph: Completely discards the "black box" of vector databases, utilizing a pure Markdown hierarchical mounting system to ensure 100% context determinism.

- 🛡️ Self-Correcting & Gating: Automatic guard hooks, failure recovery, and mandatory human-in-the-loop checkpoints (Approval Gate).

- 🔌 Rich Skill Ecosystem: Organized across multiple categories (Defaults, Role-Required, Business, Engineering Pipeline, Java Standards, QA & Debugging, Workflow, Meta), covering every lifecycle phase.

Given that Java Harness Agent is a strongly-constrained framework, its architecture shifts costs from "Trial & Error / Blind Search" to "Upfront Planning & Gating Defenses", resulting in highly predictable and stable overall costs for complex tasks.

- Each turn requires the LLM to output the `<Cognitive_Brake>` and read mandatory system contexts. This adds a fixed baseline "thinking tax" of ~500 Output Tokens and ~2000 Input Tokens per interaction.

- With the integration of the Cognitive Framework, the Agent must first self-reflect (anti-bias check), adding a few hundred tokens upfront but saving tens of thousands of tokens otherwise wasted on architectural rewrites.

| Paradigm | Behavior | Input Tokens | Output Tokens | Hidden Costs / Risks | Verdict |
|---|---|---|---|---|---|
| Pure Chat / Copilot | Jumps straight to coding with limited context. | ~5k | ~1k | High Rework Rate. Misses transaction boundaries, forgets existing enums. Requires human prompt corrections. | Cheap in Tokens, Expensive in Human Time. |
| Macro-kernel Auto-Agent | Blindly searches, loads all skills at once, loops endlessly on compile errors. | 100k+ | 10k+ | Disastrous. Burns through budget via massive context bloat and infinite loops. | Unpredictable & Dangerous. |
| Microkernel Harness Agent | Pays the "Thinking Tax", utilizes Dual-Track and funnel throttling, STOPs at high-risk gates. | ~30k | ~6k | Highly predictable. Architectural errors intercepted early; syntax errors digested by Shift-Left Validation. | The Sweet Spot. Optimized for high-quality delivery with controlled spend. |
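To make the fixed overhead concrete, the sketch below multiplies the approximate per-turn figures quoted above by a turn count. The function name `thinking_tax` and the 10-turn session are illustrative assumptions, not part of the framework.

```python
# Approximate per-turn overhead quoted in this README.
BRAKE_OUTPUT_TOKENS = 500   # ~500 output tokens for the Cognitive Brake
BRAKE_INPUT_TOKENS = 2000   # ~2000 input tokens of mandatory system context


def thinking_tax(turns: int) -> dict:
    """Return the cumulative fixed overhead for a session of `turns` interactions."""
    if turns < 0:
        raise ValueError("turns must be non-negative")
    return {
        "input_tokens": turns * BRAKE_INPUT_TOKENS,
        "output_tokens": turns * BRAKE_OUTPUT_TOKENS,
    }


if __name__ == "__main__":
    # Hypothetical 10-turn session.
    print(thinking_tax(10))  # {'input_tokens': 20000, 'output_tokens': 5000}
```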

Three Fundamental Problems Solved:

- Context Bloat (OOM): LLM blind searching wastes tokens → Solved via pure-text mounted File System and "burn-after-reading".

- Requirement Drift (Privilege Escalation): Agent free-play corrupts contracts → Solved via Microkernel Intent Gateway + strict Role Matrix guards.

- Knowledge Fragmentation (Memory Leaks): Conversation memory loss → Solved via WAL (Write-Ahead Logging) write-backs and agile Garbage Collection (GC).

The Agent is not an isolated "full-stack LLM," but a hardcore virtual team of 13 heroes with vastly different personalities. The LLM must dynamically mount these roles and use their exclusive weapons (Python Gate Scripts) to defend system discipline.

- @Requirement Engineer: "Do not send me garbage words like 'optimize'. Give me boundaries, or stay quiet!" (Weapon: `ambiguity_gate.py`)

- @Ambiguity Gatekeeper: "Wait, you want to global grep? Draw the `focus_card.md` red lines first!" (Weapon: `focus_card.md` Rune)

- @System Architect: "The blast radius is calculated. Build according to my `openspec.md` blueprint!" (Weapon: `Approval Gate` Summoning Circle)

- @Devil's Advocate: "Oh Architect, do you really think this logic survives high concurrency deadlocks?" (Weapon: `cognitive-bias-checklist`)

- @Lead Engineer: "The contract is the law. I only implement `openspec.md`." (Weapon: `javac` Furnace of Truth)

- @Focus Guard: "Your hands reach too far! Pull back inside the Focus Card ward!" (Weapon: `scope_guard.py` Ruler of Discipline)

- @Code Reviewer: "Magic Numbers? N+1 query risks? Rewrite this filthy code!" (Weapon: `Static Linter` Light of Purification)

- @Knowledge Extractor (Silent Historian): "Empires fall, but History (WAL) is eternal." (Weapon: `writeback_gate.py` Judgment of History)

- @Documentation Curator (Friend of Humanity): "Show humans some care. Comments must explain Why, not What." (Weapon: `README & Javadoc`)

- @Skill Graph Curator (OCD Librarian): "Once the index is messed up, the whole world loses its way." (Weapon: `skill_index_linter.py`)

- @Librarian (Midnight Scavenger): "Shh... Do not wake me unless you bring the `@gc` command to merge fragments." (Weapon: `librarian_gc.py`)

- @Knowledge Architect (Urban Planner): "This document exceeds 400 lines! LLMs will suffer OOM reading this! Split it!" (Weapon: Structural Reorganization)
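As an illustration of what a gate script in this role list might check, here is a minimal sketch of a Focus Guard style scope check. It is not the actual `scope_guard.py`; the function name `out_of_scope` and the idea that a Focus Card reduces to a list of allowed directory roots are assumptions made for the example.

```python
from pathlib import PurePosixPath


def out_of_scope(edited_paths, allowed_roots):
    """Return the edited paths that fall outside every allowed root.

    `allowed_roots` stands in for the scope a Focus Card would declare
    (hypothetical representation). Paths are compared lexically, so
    segments such as ".." are not resolved.
    """
    roots = [PurePosixPath(r) for r in allowed_roots]
    violations = []
    for raw in edited_paths:
        path = PurePosixPath(raw)
        # In scope if the path equals a root or lives underneath one.
        in_scope = any(path == root or root in path.parents for root in roots)
        if not in_scope:
            violations.append(raw)
    return violations


if __name__ == "__main__":
    edits = [
        "src/main/java/order/OrderService.java",
        "src/main/java/user/UserService.java",
    ]
    print(out_of_scope(edits, ["src/main/java/order"]))
```

A gate built this way would fail the step whenever the returned list is non-empty, which is one way to read the "pull back inside the Focus Card" rule.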

```mermaid
graph TD
    subgraph Input["End-User Input"]
        User["User Request"]
        Shortcut["Fast Syscall: read/patch/standard"]
    end

    subgraph Kernel["Kernel Router - Gateway"]
        IG["Intent Gateway: Parser and Anti-Bias"]
        Risk{"4-Level Risk Matrix"}
        TRIVIAL["TRIVIAL: Fast Path"]
        LOW["LOW: PATCH Track"]
        MEDHIGH["MEDIUM / HIGH: STANDARD Track"]
    end

    subgraph Context["Virtual Memory - Context"]
        DirectRead["Register Read: Explicit Scope"]
        Funnel["Page Table Funnel: Sitemap to Index"]
        Budget["OOM Killer: Wiki<=3, Code<=8"]
    end

    subgraph Knowledge["File System - RAG/Disk"]
        KG["KNOWLEDGE_GRAPH.md: Mount Root"]
        DomainIndex["Partitions: api / data / domain"]
        Archive["Cold Backup: Archive"]
    end

    subgraph Lifecycle["Process Scheduler"]
        LaunchSpec["PCB: Launch Spec"]
        Phase1["1_Explorer: Clarify and Decompose"]
        Phase2["2_Propose: Freeze Contract"]
        Phase3["3_Review: Cognitive Critique"]
        ApprovalGate["Kernel Switch: Approval Gate"]
        Phase4["4_Implement: Atomic Execution"]
        Phase5["5_QA: 3D Testing"]
        Phase6["6_Archive: WAL Write-back and GC"]
    end

    subgraph Roles["Privilege Rings - Ring 0/3"]
        SysArchitect["System Architect: Arch Auth"]
        FocusGuard["Focus Guard: Segfault Guard"]
        DocCurator["Doc Curator: FS Write Auth"]
    end

    Input --> IG
    IG --> Risk
    Risk -->|TRIVIAL| TRIVIAL
    Risk -->|LOW| LOW
    Risk -->|MEDIUM/HIGH| MEDHIGH
    TRIVIAL --> DirectRead
    LOW --> LaunchSpec
    MEDHIGH --> LaunchSpec
    DirectRead --> Funnel
    Funnel --> Budget
    LaunchSpec --> Phase1
    Phase1 --> Phase2
    Phase2 --> Phase3
    Phase3 --> ApprovalGate
    ApprovalGate --> Phase4
    Phase4 --> Phase5
    Phase5 --> Phase6
    Phase6 --> LaunchSpec
```

No more one-size-fits-all red tape. The framework assesses tasks at the Kernel entry point (Router) and routes them to different tracks:

| Risk Level | Characteristics | Authorization | Testing | Rollback Cost |
|---|---|---|---|---|
| TRIVIAL | Queries, logging, typos, reading | Auto-Approve | Optional | Zero |
| LOW | Single-method bugfix, internal refactor (no API/DB change) | Implicit (PATCH) | Unit Tests | Very Low |
| MEDIUM | New APIs, DB column additions, cross-module calls | Explicit (Approval Gate) | Integration | High |
| HIGH | Core flow modifications, state machine changes | Explicit + Arch Review | Full Regression | Disastrous |

The Fast Path. Zero bureaucracy.

- Skips the lengthy `Propose` and `Review` phases.

- No heavy `openspec.md` generated; uses a lightweight `focus_card.md`.

- Straight to implementation and testing.

- Extremely low token cost, ideal for high-frequency, small iterations.

Heavy Armor. Defending the engineering baseline.

- Strictly follows the full 6-phase lifecycle (Explorer → Propose → Review → Implement → QA → Archive).

- Mandates the generation of `openspec.md` and triggers the Approval Gate (Human-in-the-loop) before writing any code.

- Injects cognitive critique to interrogate the architectural design.
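The routing described in the risk matrix and the two track descriptions can be sketched as a small lookup. This is an illustration, not the framework's router: the `Risk` enum, the `route` function, and the returned dictionary shape are hypothetical. The phase list used for PATCH (the full lifecycle minus Propose and Review) and the empty phase list for TRIVIAL are assumptions, since the text only states which phases PATCH skips.

```python
from enum import Enum


class Risk(Enum):
    TRIVIAL = "trivial"
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


# The six phases of the STANDARD track, in order.
FULL_LIFECYCLE = ["Explorer", "Propose", "Review", "Implement", "QA", "Archive"]


def route(risk: Risk) -> dict:
    """Map a risk level to a track, an authorization mode, and a phase list."""
    if risk is Risk.TRIVIAL:
        # Assumption: the fast path runs no lifecycle phases at all.
        return {"track": "FAST_PATH", "approval": "auto", "phases": []}
    if risk is Risk.LOW:
        # PATCH skips Propose and Review; keeping the rest is an assumption.
        phases = [p for p in FULL_LIFECYCLE if p not in ("Propose", "Review")]
        return {"track": "PATCH", "approval": "implicit", "phases": phases}
    # MEDIUM and HIGH both take the full STANDARD lifecycle.
    approval = "explicit" if risk is Risk.MEDIUM else "explicit+arch_review"
    return {"track": "STANDARD", "approval": approval, "phases": list(FULL_LIFECYCLE)}


if __name__ == "__main__":
    for level in Risk:
        print(level.name, route(level))
```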

The skill ecosystem is organized across multiple categories, each mounted by lifecycle phase and role requirements. Skills are stored under .agents/skills/ with trae-skill-index serving as the global routing table.

| Skill | Phase | Primary Role |
|---|---|---|
| `brainstorming` | Explorer / Propose | Requirement Engineer |
| `task-decomposition-guide` | Propose / Review | System Architect |
| `writing-plans` | Propose / Implement | System Architect / Lead Engineer |
| `systematic-debugging` | Implement / QA | Lead Engineer / Code Reviewer |
| `test-driven-development` | Implement | Lead Engineer |
| `verify` | QA / Archive | Code Reviewer / Knowledge Extractor |
| `code-review-checklist` | QA | Code Reviewer |
| `wal-documentation-rules` | Archive | Knowledge Extractor |
| `skill-graph-manager` | Any (skills change) | Skill Graph Curator |
| `java-architecture-standards` | Propose / Implement | System Architect / Lead Engineer |
| `java-coding-style` | Implement | Lead Engineer |
| `java-testing-standards` | QA | Code Reviewer |
| `mybatis-sql-standard` | Propose / Implement | System Architect / Lead Engineer |

| Skill | Required By |
|---|---|
| `cognitive-bias-checklist` | Requirement Engineer, System Architect, Devil's Advocate |
| `spec-quality-checklist` | Requirement Engineer, System Architect, Documentation Curator |
| `decision-frameworks` | System Architect, Devil's Advocate, Ambiguity Gatekeeper |
| `linter-severity-standard` | Code Reviewer |

ai-pipeline, blueprint, architecture-decision-records, eval-harness, external-research, self-improve, ai-slop-cleaner

dispatching-parallel-agents, using-git-worktrees, release, deepinit, remember

Start with AGENTS.md - the master entry point defining execution discipline and OS mounting rules.

- OOM Killer: Wiki ≤ 3 docs, Code ≤ 8 files (Trigger Escalation if exceeded).

- Cognitive Brake: Mandatory XML block before any action to enforce Process, Scope, Budget, and bias reflection.
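A minimal sketch of the OOM Killer budget rule follows, assuming the budget is checked against the lists of documents and files about to be loaded. The names `check_budget` and `BudgetExceeded` are hypothetical, and what "Trigger Escalation" does in the real framework is not specified here, so the sketch simply raises.

```python
WIKI_DOC_LIMIT = 3   # "Wiki <= 3 docs" from the rule above
CODE_FILE_LIMIT = 8  # "Code <= 8 files" from the rule above


class BudgetExceeded(Exception):
    """Raised when a context load would exceed the budget (hypothetical name)."""


def check_budget(wiki_docs, code_files):
    """Raise BudgetExceeded if either list is over its limit; else return the counts."""
    over = []
    if len(wiki_docs) > WIKI_DOC_LIMIT:
        over.append(f"wiki docs {len(wiki_docs)} > {WIKI_DOC_LIMIT}")
    if len(code_files) > CODE_FILE_LIMIT:
        over.append(f"code files {len(code_files)} > {CODE_FILE_LIMIT}")
    if over:
        # Stand-in for "Trigger Escalation": stop instead of loading more context.
        raise BudgetExceeded("escalate: " + "; ".join(over))
    return {"wiki": len(wiki_docs), "code": len(code_files)}


if __name__ == "__main__":
    print(check_budget(["api.md", "data.md"], ["OrderService.java"]))
```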

Use explicit commands to force the framework into a specific track:

@read / @learn → Enter Read-Only Process (TRIVIAL, no side effects)

@patch / @quickfix → Mount PATCH Track (LOW, lightweight fix)

@standard → Mount STANDARD Track (MEDIUM/HIGH, full heavy lifecycle)

Example:

@learn --scope src/foo/bar.ts -- explain this file

@patch --risk low --test "mvn test" -- fix NPE in createOrder

@standard --risk high -- implement tenant permission checks for order list API
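To show how such a shortcut line could be split into a track, options, and a task description, here is a small parsing sketch. It is not the framework's parser: the function `parse_shortcut`, the track labels, and the rule that every `--flag` takes exactly one value are assumptions inferred from the three examples above.

```python
import shlex

# Command-to-track mapping taken from the shortcut list above.
TRACKS = {
    "@read": "READ_ONLY",
    "@learn": "READ_ONLY",
    "@patch": "PATCH",
    "@quickfix": "PATCH",
    "@standard": "STANDARD",
}


def parse_shortcut(line: str) -> dict:
    """Parse '@cmd --flag value ... -- free text' into track, options, and task."""
    # shlex keeps quoted values such as "mvn test" together as one token.
    # Note: an unbalanced quote or apostrophe in the task text makes shlex raise.
    tokens = shlex.split(line)
    if not tokens or tokens[0] not in TRACKS:
        raise ValueError(f"unknown shortcut command: {line!r}")
    options = {}
    i = 1
    while i < len(tokens) and tokens[i] != "--":
        flag = tokens[i]
        if not flag.startswith("--") or i + 1 >= len(tokens):
            raise ValueError(f"expected '--flag value', got {flag!r}")
        options[flag[2:]] = tokens[i + 1]
        i += 2
    # Everything after the bare "--" separator is the natural-language task.
    task = " ".join(tokens[i + 1:]) if i < len(tokens) else ""
    return {"track": TRACKS[tokens[0]], "options": options, "task": task}


if __name__ == "__main__":
    print(parse_shortcut('@patch --risk low --test "mvn test" -- fix NPE in createOrder'))
```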

- Launch Spec is persisted at `router/runs/launch_spec_*.md` (Acts as the PCB - Process Control Block).

- First action after session interruption: read this file to restore state.

- If stuck at `WAITING_APPROVAL`, the Agent will wait for you to review `openspec.md` and say "Approved" before switching to kernel mode to write code.
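The recovery steps above can be sketched as two helpers: one that locates the most recent Launch Spec, and one that models the approval gate as a state transition. Both function names are hypothetical, the file's internal format is not assumed (only its name pattern is used), picking the newest file by modification time is a guess at how "this file" is chosen, and the `IMPLEMENT` state label is invented for the example.

```python
from pathlib import Path
from typing import Optional


def latest_launch_spec(runs_dir) -> Optional[Path]:
    """Return the most recently modified launch_spec_*.md in runs_dir, or None."""
    candidates = sorted(
        Path(runs_dir).glob("launch_spec_*.md"),
        key=lambda p: p.stat().st_mtime,
    )
    return candidates[-1] if candidates else None


def next_state(state: str, human_reply: str) -> str:
    """Leave WAITING_APPROVAL only when the human explicitly says 'Approved'."""
    if state == "WAITING_APPROVAL" and human_reply.strip().lower() == "approved":
        return "IMPLEMENT"  # hypothetical label for the code-writing phase
    return state


if __name__ == "__main__":
    spec = latest_launch_spec("router/runs")
    print(spec if spec else "no launch spec found; start a new run")
    print(next_state("WAITING_APPROVAL", "Approved"))
```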

| Mechanism | Trigger Point | Effect | OS Metaphor |
|---|---|---|---|
| Cognitive_Brake | Before any action | Forces LLM reasoning (roles, boundaries, bias reflection) | Kernel Privilege Check |
| pre_hook | Before new phase | Load rules + output preflight | Context Switch |
| guard_hook | During code edit | Blocks style/auth violations immediately; runs `secrets_linter.py` | Memory Segfault Guard |
| Approval Gate | After Review | Freezes contract, waits for human | User-to-Kernel Switch |
| shift_left_hook | After code written | Forces autonomous compile check (`javac` / `mvn compile`); max 2 retries | Build Sanity Check |
| Archive Write-back | Task end | Appends stable specs to Wiki Index (WAL) | fsync (Dirty Page Write) |
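As one possible reading of the `shift_left_hook` row, the sketch below runs a compile command and retries a bounded number of times. It is not the framework's hook: the function name, the `on_failure` callback (a stand-in for whatever fix the Agent applies between attempts), and the interpretation of "max 2 retries" as one initial attempt plus up to two more are all assumptions.

```python
import subprocess


def shift_left_check(command, max_retries=2, on_failure=None):
    """Run `command`; on failure call `on_failure(stderr)` and retry.

    Returns (succeeded, attempts). With max_retries=2 the command runs
    at most three times: the initial attempt plus two retries.
    """
    attempts = 0
    while True:
        attempts += 1
        result = subprocess.run(command, capture_output=True, text=True)
        if result.returncode == 0:
            return True, attempts
        if attempts > max_retries:
            # Retry budget spent: report failure instead of looping forever.
            return False, attempts
        if on_failure is not None:
            on_failure(result.stderr)


if __name__ == "__main__":
    # Requires Maven on PATH; swap in any compile command for your project.
    ok, tries = shift_left_check(["mvn", "compile"])
    print("compiled" if ok else "escalate to human", "after", tries, "attempt(s)")
```

Capping the loop is the point of the design: a compile error that survives the retry budget is handed back rather than burning tokens on further attempts.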

- 📘 Engineering Manual (Chinese): ENGINEERING_MANUAL_zh.md

- 📘 Engineering Manual (English): ENGINEERING_MANUAL.md

- 🇨🇳 Chinese README: README_zh.md

- 📌 Project Rules: AGENTS.md - Master rule entry & constitution

- 🗺️ Knowledge Graph: .agents/llm_wiki/KNOWLEDGE_GRAPH.md - Virtual FS Root

Welcome to co-build this pure M2M engineering infrastructure!

- Read First: Deeply understand the Microkernel philosophy in ENGINEERING_MANUAL.md.

- Follow Lifecycle: Architectural changes must go through the `STANDARD` track.

- Restraint: We pursue high density and orthogonality in skills. Refuse adding "spaghetti" single-instruction skills.

This project is licensed under the MIT License - see the LICENSE file for details.

Built with ❤️ for sustainable, non-bloating backend development