Solve differential equations with Physics-Informed Neural Networks.

Modular. Training-agnostic. Inverse-problem-first.

Most PINN libraries make you wire up every loss term, collocation grid, and training loop by hand before you see a single result. AnyPINN gives you a working experiment in one command and then lets you peel back every layer when you're ready.

The fastest way to start is the bootstrap CLI. It scaffolds a complete, runnable project interactively. Run it with uvx (ships with uv):

uvx anypinn create my-project

or with pipx:

pipx run anypinn create my-project

Run anypinn create --help to see all available flags and templates. For a full walkthrough covering project structure, configuration, training, and next steps, see the Getting Started guide.

AnyPINN is built around progressive complexity. Start simple, go deeper only when you need to.

| User | Goal | How |
|---|---|---|
| Experimenter | Run a known problem, tweak parameters, see results | Pick a built-in template, change config, press start |
| Researcher | Define new physics or custom constraints | Subclass Constraint and Problem, use the provided training engine |
| Framework builder | Custom training loops, novel architectures | Use anypinn.core directly, no Lightning required |

The examples/ directory has ready-made, self-contained scripts covering epidemic models, oscillators, predator-prey dynamics, and more, from a minimal ~80-line core-only script to full Lightning stacks. They're a great source of inspiration when defining your own problem.

If you want to go beyond the built-in templates, here is the full workflow for defining a custom ODE inverse problem.

Implement a function matching the ODECallable protocol:

from torch import Tensor
from anypinn.core import ArgsRegistry

def my_ode(x: Tensor, y: Tensor, args: ArgsRegistry) -> Tensor:
    """Return dy/dx given current state y and position x."""
    k = args["k"](x)  # learnable or fixed parameter, evaluated at x
    return -k * y  # simple exponential decay

Next, subclass ODEHyperparameters to set the weight of each loss term:

from dataclasses import dataclass
from anypinn.problems import ODEHyperparameters

@dataclass(frozen=True, kw_only=True)
class MyHyperparameters(ODEHyperparameters):
    pde_weight: float = 1.0   # ODE residual term
    ic_weight: float = 10.0   # initial-condition term
    data_weight: float = 5.0  # data-matching term

Then build the problem from the ODE, its fields, and its parameters:

from anypinn.problems import ODEInverseProblem, ODEProperties

# field (a Field), param (a Parameter), y0 (the initial state) and hp
# (e.g. a MyHyperparameters instance) are assumed to be created beforehand.
props = ODEProperties(ode=my_ode, args={"k": param}, y0=y0)
problem = ODEInverseProblem(
    ode_props=props,
    fields={"u": field},
    params={"k": param},
    hp=hp,
)

Finally, train it, either with Lightning or with your own loop:

import pytorch_lightning as pl
import torch
from anypinn.lightning import PINNModule

# With Lightning (batteries included); dm is your data module (e.g. a PINNDataModule subclass)
module = PINNModule(problem, hp)
trainer = pl.Trainer(max_epochs=50_000)
trainer.fit(module, datamodule=dm)

# Or with your own training loop (core only, no Lightning);
# dataloader and my_log_fn are yours to provide
optimizer = torch.optim.Adam(problem.parameters(), lr=1e-3)
for batch in dataloader:
    optimizer.zero_grad()
    loss = problem.training_loss(batch, log=my_log_fn)
    loss.backward()
    optimizer.step()

AnyPINN is split into four layers with a strict dependency direction: outer layers depend on inner ones, never the reverse.

graph TD

EXP["Your Experiment / Generated Project"]

EXP --> CAT

EXP --> LIT

subgraph CAT["anypinn.catalog"]

direction LR

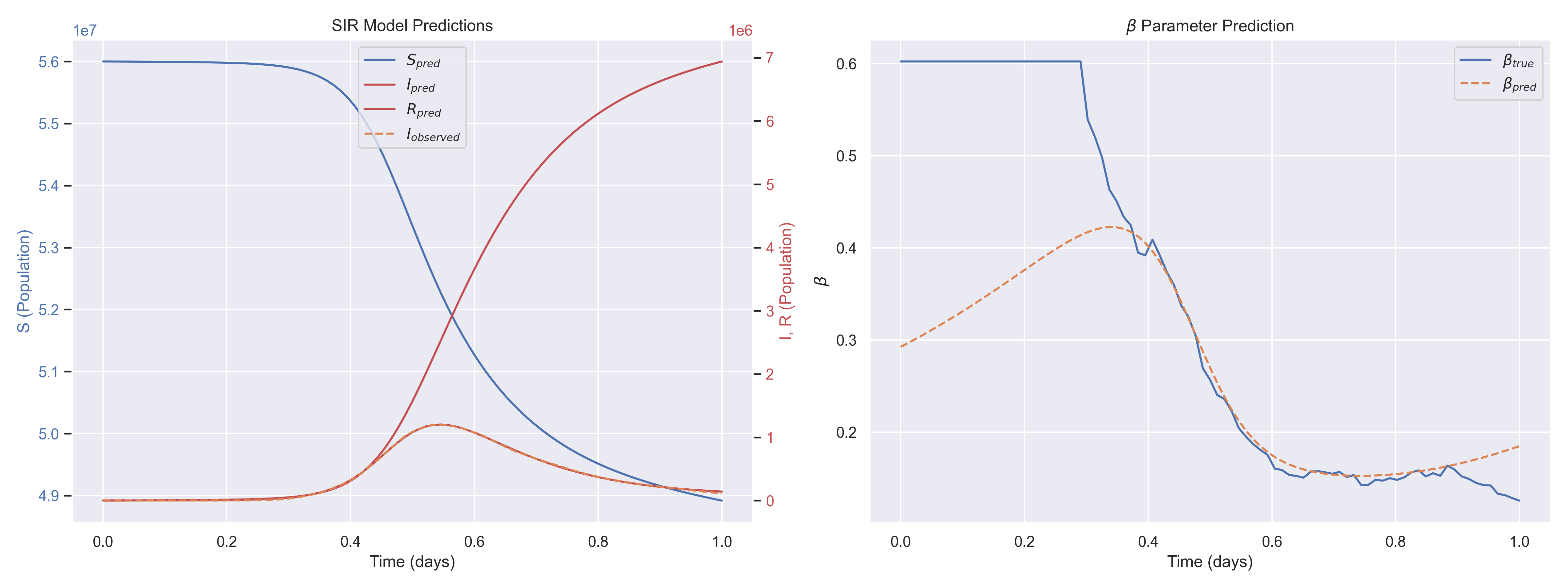

CA1[SIR / SEIR]

CA2[DampedOscillator]

CA3[LotkaVolterra]

end

subgraph LIT["anypinn.lightning (optional)"]

direction LR

L1[PINNModule]

L2[Callbacks]

L3[PINNDataModule]

end

subgraph PROB["anypinn.problems"]

direction LR

P1[ResidualsConstraint]

P2[ICConstraint]

P3[DataConstraint]

P4[ODEInverseProblem]

end

subgraph CORE["anypinn.core (standalone · pure PyTorch)"]

direction LR

C1[Problem · Constraint]

C2[Field · Parameter]

C3[Config · Context]

end

CAT -->|depends on| PROB

CAT -->|depends on| CORE

LIT -->|depends on| CORE

PROB -->|depends on| CORE
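One way to keep the core layer standalone is to check its imports mechanically. The sketch below is a hypothetical helper, not part of AnyPINN's tooling: it parses a module's source with Python's standard ast module and flags imports that point from anypinn.core to an outer layer or to Lightning. The forbidden-prefix list is an assumption read off the diagram (core is "standalone · pure PyTorch") and the note that core works without Lightning.

```python
import ast

# Assumption: module prefixes that anypinn.core must never import, taken from the
# diagram's dependency direction and the "no Lightning required" core layer.
FORBIDDEN_FOR_CORE = (
    "anypinn.problems",
    "anypinn.lightning",
    "anypinn.catalog",
    "pytorch_lightning",
    "lightning",
)


def imported_modules(source: str) -> list[str]:
    """Return the absolute module names imported by a piece of Python source."""
    names: list[str] = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names.extend(alias.name for alias in node.names)
        elif isinstance(node, ast.ImportFrom) and node.level == 0 and node.module:
            names.append(node.module)
        # Relative imports (level > 0) are skipped in this sketch.
    return names


def core_violations(source: str) -> list[str]:
    """List the imports in a core module that point outward or at Lightning."""
    return [
        name
        for name in imported_modules(source)
        if any(name == p or name.startswith(p + ".") for p in FORBIDDEN_FOR_CORE)
    ]
```

Running core_violations over every file in the core package from a unit test would catch an accidental `import pytorch_lightning` before it ships.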

The core layer, anypinn.core, is pure PyTorch: it defines what a PINN problem is, with no opinions about training.

- Problem: aggregates constraints, fields, and parameters. Provides training_loss() and predict().
- Constraint (ABC): a single loss term. Subclass it to express any physics equation, boundary condition, or data-matching objective.
- Field: MLP mapping input coordinates to state variables (e.g., t → [S, I, R]).
- Parameter: learnable scalar or function-valued parameter (e.g., β in SIR).
- InferredContext: runtime domain bounds and validation references, extracted from data and injected into constraints automatically.
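To make the Problem/Constraint split concrete, here is a framework-free toy in plain Python. The class names mirror the roles listed above, but this is a hypothetical sketch, not AnyPINN's Constraint or Problem API (which works on PyTorch tensors and training batches): each constraint returns one unweighted loss, and the problem sums the weighted terms into a single scalar.

```python
from abc import ABC, abstractmethod
from typing import Callable, Sequence

# A candidate solution: maps a coordinate t to a predicted state value.
Solution = Callable[[float], float]


class ToyConstraint(ABC):
    """One weighted loss term (the role Constraint plays)."""

    def __init__(self, weight: float = 1.0) -> None:
        self.weight = weight

    @abstractmethod
    def loss(self, u: Solution) -> float:
        """Return the unweighted loss of candidate solution u."""


class ToyICConstraint(ToyConstraint):
    """Squared error of the initial condition u(t0) = y0."""

    def __init__(self, t0: float, y0: float, weight: float = 1.0) -> None:
        super().__init__(weight)
        self.t0, self.y0 = t0, y0

    def loss(self, u: Solution) -> float:
        return (u(self.t0) - self.y0) ** 2


class ToyDataConstraint(ToyConstraint):
    """Mean squared error against observed (t, y) pairs."""

    def __init__(self, ts: Sequence[float], ys: Sequence[float], weight: float = 1.0) -> None:
        super().__init__(weight)
        self.ts, self.ys = list(ts), list(ys)

    def loss(self, u: Solution) -> float:
        errors = [(u(t) - y) ** 2 for t, y in zip(self.ts, self.ys)]
        return sum(errors) / len(errors)


class ToyProblem:
    """Aggregates constraints into one scalar, mirroring Problem.training_loss()."""

    def __init__(self, constraints: Sequence[ToyConstraint]) -> None:
        self.constraints = list(constraints)

    def training_loss(self, u: Solution) -> float:
        return sum(c.weight * c.loss(u) for c in self.constraints)


problem = ToyProblem([
    ToyICConstraint(0.0, 1.0, weight=10.0),
    ToyDataConstraint([1.0, 2.0], [0.5, 0.25], weight=5.0),
])
print(problem.training_loss(lambda t: 0.5 ** t))  # 0.0: fits both the IC and the data
```

Adding a new kind of physics then means adding one more constraint subclass; the aggregation step does not change.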

The optional lightning layer, anypinn.lightning, is a thin wrapper that plugs a Problem into PyTorch Lightning:

- PINNModule: LightningModule wrapping any Problem. Handles optimizer setup, context injection, and prediction.
- PINNDataModule: abstract data module managing loading, config-driven collocation sampling, and context creation. The collocation strategy is selected via TrainingDataConfig.collocation_sampler ("random", "uniform", "latin_hypercube", "log_uniform_1d", or "adaptive").
- Callbacks: SMMA-based early stopping, formatted progress bars, data scaling, prediction writers.
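Two of the collocation strategies named above, Latin hypercube and log-uniform sampling, are standard techniques that are easy to see in miniature. The sketch below is plain Python and illustrates the ideas only; it is not AnyPINN's sampler implementation, and the function names are hypothetical.

```python
import math
import random


def latin_hypercube(
    n: int, bounds: list[tuple[float, float]], rng: random.Random
) -> list[list[float]]:
    """Draw n points so each dimension has exactly one point in each of n equal strata."""
    columns = []
    for lo, hi in bounds:
        # One uniform draw inside each stratum [i/n, (i+1)/n) of the unit interval...
        strata = [(i + rng.random()) / n for i in range(n)]
        # ...shuffled independently per dimension so strata pair up randomly.
        rng.shuffle(strata)
        columns.append([lo + s * (hi - lo) for s in strata])
    # Transpose the per-dimension columns into n points.
    return [list(point) for point in zip(*columns)]


def log_uniform_1d(n: int, lo: float, hi: float, rng: random.Random) -> list[float]:
    """Sample n sorted points in [lo, hi] (lo > 0), uniform in log-space."""
    log_lo, log_hi = math.log(lo), math.log(hi)
    # Clamp to guard against exp(log(x)) landing a hair outside the interval.
    return sorted(
        min(hi, max(lo, math.exp(rng.uniform(log_lo, log_hi)))) for _ in range(n)
    )


points = latin_hypercube(8, [(0.0, 1.0), (-2.0, 2.0)], random.Random(0))
times = log_uniform_1d(1000, 0.01, 100.0, random.Random(1))
```

Latin hypercube sampling avoids the clumps and gaps that plain random sampling can leave with few points. Log-uniform sampling places as many points in [0.01, 0.1] as in [10, 100], which can help when a 1-D solution changes fastest near the low end of its domain.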

The problems layer, anypinn.problems, provides ready-made constraints for ODE inverse problems:

- ResidualsConstraint: ‖dy/dt − f(t, y)‖², with dy/dt computed via autograd
- ICConstraint: ‖y(t₀) − y₀‖²
- DataConstraint: ‖prediction − observed data‖²
- ODEInverseProblem: composes all three with configurable weights
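To see what the residual term measures, the sketch below evaluates ‖dy/dt − f(t, y)‖² for the exponential-decay ODE from the my_ode example, using plain floats. Two substitutions are assumptions of this sketch, not AnyPINN behavior: a central finite difference replaces autograd for the derivative, and args is a plain dict of functions standing in for ArgsRegistry.

```python
import math
from typing import Callable, Mapping

# Plain-float stand-in for ArgsRegistry: parameter name -> function of x.
Args = Mapping[str, Callable[[float], float]]


def decay(x: float, y: float, args: Args) -> float:
    """Same right-hand side as my_ode: dy/dx = -k(x) * y."""
    return -args["k"](x) * y


def residual_loss(
    u: Callable[[float], float],
    f: Callable[[float, float, Args], float],
    args: Args,
    xs: list[float],
    h: float = 1e-5,
) -> float:
    """Mean of (du/dx - f(x, u(x)))**2 over the collocation points xs.

    A central finite difference approximates du/dx here; AnyPINN uses autograd.
    """
    total = 0.0
    for x in xs:
        dudx = (u(x + h) - u(x - h)) / (2 * h)
        total += (dudx - f(x, u(x), args)) ** 2
    return total / len(xs)


def u(x: float) -> float:
    """Exact solution of the decay ODE for k = 0.5 and y(0) = 1."""
    return math.exp(-0.5 * x)


xs = [0.25 * j for j in range(9)]
print(residual_loss(u, decay, {"k": lambda x: 0.5}, xs))  # essentially zero
print(residual_loss(u, decay, {"k": lambda x: 0.8}, xs))  # clearly positive
```

In an inverse problem, the optimizer drives this term down by adjusting the learnable k together with the network behind u.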

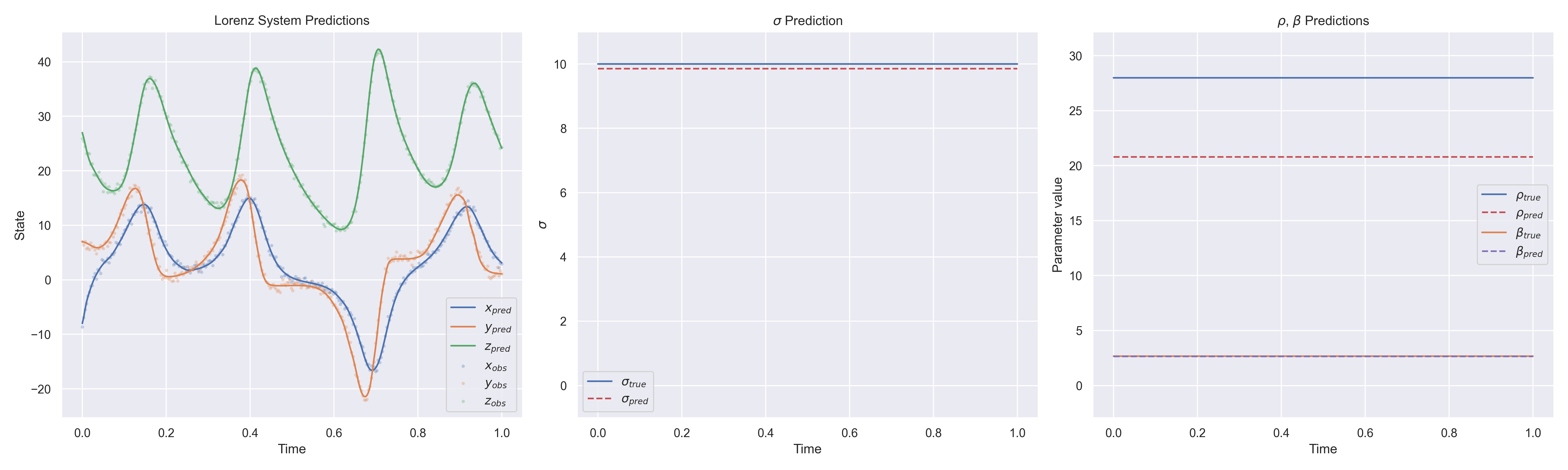

The catalog layer, anypinn.catalog, provides drop-in ODE functions and DataModules for specific systems. See anypinn/catalog/ for the full list.
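Catalog systems such as SIR are, presumably, ODE functions of the same shape as my_ode, only with more state variables. As an illustration of what such a function computes, here is the standard SIR right-hand side with the transmission rate β and a recovery rate γ as parameters. This is a hypothetical plain-float sketch (sir, rates and Args are illustrative names), not the catalog's actual code; its normalization and parameter names may differ.

```python
from typing import Callable, Mapping, Sequence

# Plain-float stand-in for ArgsRegistry: parameter name -> function of t.
Args = Mapping[str, Callable[[float], float]]


def sir(t: float, y: Sequence[float], args: Args) -> list[float]:
    """Right-hand side of the SIR model for the state y = [S, I, R].

    Uses the frequency-dependent form beta * S * I / N, one common convention.
    """
    s, i, r = y
    n = s + i + r  # total population, conserved by the model
    beta, gamma = args["beta"](t), args["gamma"](t)
    new_infections = beta * s * i / n
    recoveries = gamma * i
    return [-new_infections, new_infections - recoveries, recoveries]


rates = {"beta": lambda t: 0.3, "gamma": lambda t: 0.1}
dydt = sir(0.0, [990.0, 10.0, 0.0], rates)
print(dydt)  # approximately [-2.97, 1.97, 1.0]
```

Because the three derivatives sum to zero, S + I + R stays constant, a quick sanity check for any hand-written compartmental model.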

See CONTRIBUTING.md for setup instructions, code style guidelines, and the pull request workflow.