-

+

@@ -10,15 +10,18 @@

+

+

+

+  +

+

+

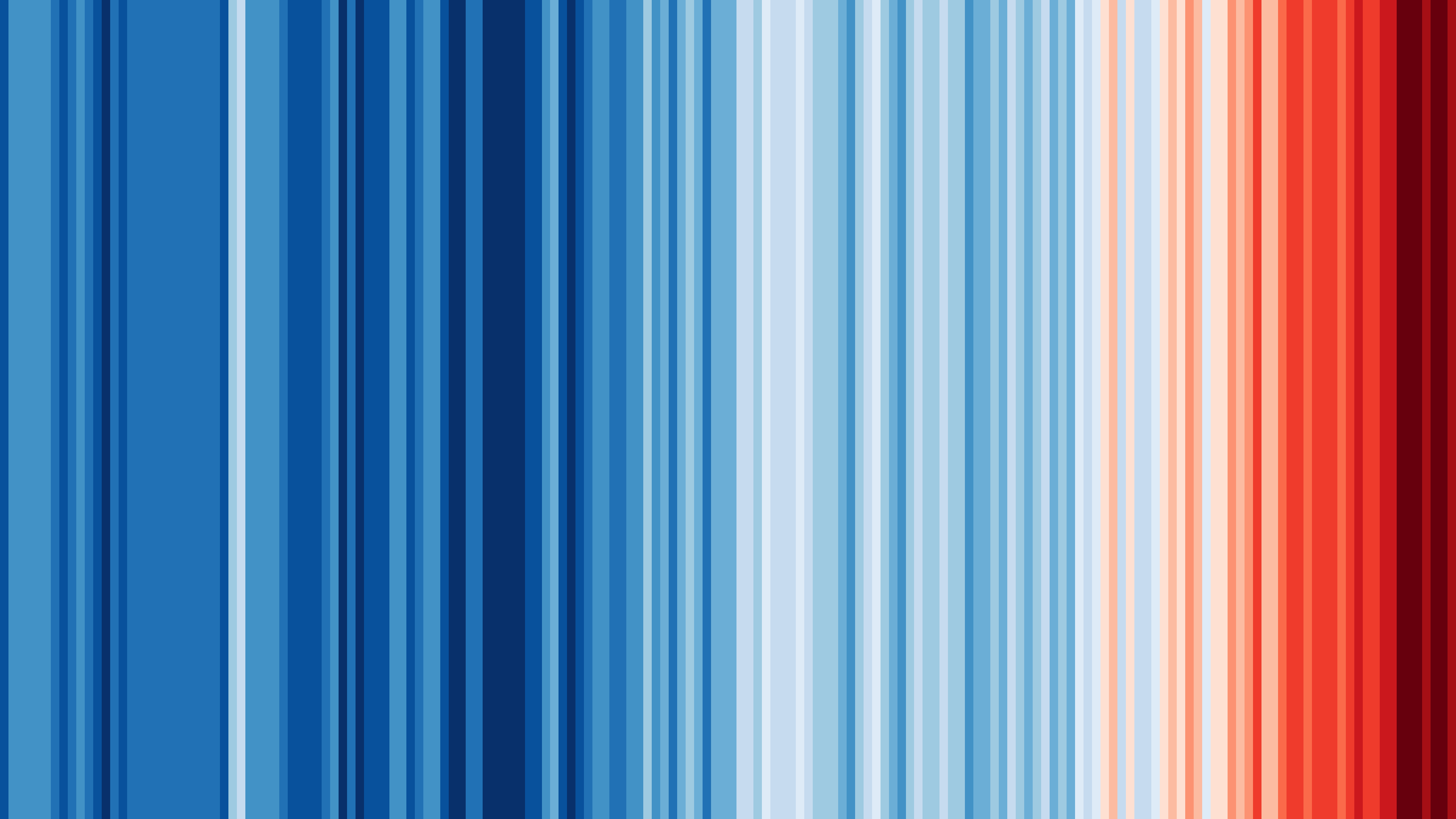

+**Graphics and Lead Scientist**: [Ed Hawkins](http://www.met.reading.ac.uk/~ed/home/index.php), National Centre for Atmospheric Science, University of Reading.

+

+**Data**: Berkeley Earth, NOAA, UK Met Office, MeteoSwiss, DWD, SMHI, UoR, Meteo France & ZAMG.

+

+

+#ShowYourStripes is distributed under a

+Creative Commons Attribution 4.0 International License

+

+  +

+

Built using Python {{ python_version }}.

diff --git a/docs/src/_templates/custom_sidebar_logo_version.html b/docs/src/_templates/custom_sidebar_logo_version.html

new file mode 100644

index 0000000000..c9d9ac6e2e

--- /dev/null

+++ b/docs/src/_templates/custom_sidebar_logo_version.html

@@ -0,0 +1,26 @@

+{% if on_rtd %}

+ {% if rtd_version == 'latest' %}

+

+ -{{ version }}-gold?style=flat)

+

+ {% elif rtd_version == 'stable' %}

+

+ -{{ version }}-green?style=flat)

+

+ {% elif rtd_version_type == 'tag' %}

+ {# Covers builds for specific tags, including RC's. #}

+

+ -{{ version }}-red?style=?style=flat)

+

+ {% else %}

+ {# Anything else built by RTD will be the HEAD of an activated branch #}

+

+

+

+ {% endif %}

+{%- else %}

+ {# not on rtd #}

+

+

+

+{%- endif %}

diff --git a/docs/src/_templates/footer.html b/docs/src/_templates/footer.html

deleted file mode 100644

index 1d5fb08b78..0000000000

--- a/docs/src/_templates/footer.html

+++ /dev/null

@@ -1,5 +0,0 @@

-{% extends "!footer.html" %}

-{% block extrafooter %}

- Built using Python {{ python_version }}.

- {{ super() }}

-{% endblock %}

diff --git a/docs/src/_templates/layout.html b/docs/src/_templates/layout.html

index 96a2e0913e..974bd12753 100644

--- a/docs/src/_templates/layout.html

+++ b/docs/src/_templates/layout.html

@@ -1,47 +1,20 @@

-{% extends "!layout.html" %}

+{% extends "pydata_sphinx_theme/layout.html" %}

-{# This uses blocks. See:

+{# This uses blocks. See:

https://www.sphinx-doc.org/en/master/templating.html

#}

-/*---------------------------------------------------------------------------*/

-{%- block document %}

- {% if READTHEDOCS and rtd_version == 'latest' %}

-

+ {%- block docs_body %}

+

+ {% if on_rtd and rtd_version == 'latest' %}

+

{%- endif %}

{{ super() }}

{%- endblock %}

-

-/*-----------------------------------------------------z----------------------*/

-

-{% block menu %}

- {{ super() }}

-

- {# menu_links and menu_links_name are set in conf.py (html_context) #}

-

- {% if menu_links %}

- `

+* `Twitter `_

+

+Interoperability

+----------------

+

+There's a big choice of Python tools out there! Each one has strengths and

+weaknesses in different areas, so we don't want to force a single choice for your

+whole workflow - we'd much rather make it easy for you to choose the right tool

+for the moment, switching whenever you need. Below are our ongoing efforts at

+smoother interoperability:

+

+.. not using toctree due to combination of child pages and cross-references.

+

+* The :mod:`iris.pandas` module

+* :doc:`iris_xarray`

+

+.. toctree::

+ :maxdepth: 1

+ :hidden:

+

+ iris_xarray

+

+Plugins

+-------

+

+Iris can be extended with **plugins**! See below for further information:

+

+.. toctree::

+ :maxdepth: 2

+

+ plugins

diff --git a/docs/src/community/iris_xarray.rst b/docs/src/community/iris_xarray.rst

new file mode 100644

index 0000000000..859597da78

--- /dev/null

+++ b/docs/src/community/iris_xarray.rst

@@ -0,0 +1,154 @@

+.. include:: ../common_links.inc

+

+======================

+Iris ❤️ :term:`Xarray`

+======================

+

+There is a lot of overlap between Iris and :term:`Xarray`, but some important

+differences too. Below is a summary of the most important differences, so that

+you can be prepared, and to help you choose the best package for your use case.

+

+Overall Experience

+------------------

+

+Iris is the more specialised package, focussed on making it as easy

+as possible to work with meteorological and climatological data. Iris

+is built to natively handle many key concepts, such as the CF conventions,

+coordinate systems and bounded coordinates. Iris offers a smaller toolkit of

+operations than Xarray, particularly in its API for sophisticated

+computation such as array manipulation and multi-processing.

+

+Xarray's more generic data model and community-driven development give it a

+richer range of operations and broader possible uses. Using Xarray

+specifically for meteorology/climatology may require deeper knowledge

+compared to using Iris, and you may prefer to add Xarray plugins

+such as :ref:`cfxarray` to get the best experience. Advanced users can likely

+achieve better performance with Xarray than with Iris.

+

+Conversion

+----------

+There are multiple ways to convert between Iris and Xarray objects.

+

+* Xarray includes the :meth:`~xarray.DataArray.to_iris` and

+ :meth:`~xarray.DataArray.from_iris` methods - detailed in the

+ `Xarray IO notes on Iris`_. Since Iris evolves independently of Xarray, be

+ vigilant for concepts that may be lost during the conversion.

+* Because both packages are closely linked to the :term:`NetCDF Format`, it is

+ feasible to save a NetCDF file using one package then load that file using

+ the other package. This will be lossy in places, as both Iris and Xarray

+ are opinionated on how certain NetCDF concepts relate to their data models.

+* The Iris development team are exploring an improved 'bridge' between the two

+ packages. Follow the conversation on GitHub: `iris#4994`_. This project is

+ expressly intended to be as lossless as possible.

+

+Regridding

+----------

+Iris and Xarray offer a range of regridding methods - both natively and via

+additional packages such as `iris-esmf-regrid`_ and `xESMF`_ - which overlap

+in places but tend to cover a different set of use cases (e.g. Iris handles

+unstructured meshes but offers access to fewer ESMF methods). The behaviour of

+these regridders also differs slightly (even between different regridders

+attached to the same package) so the appropriate package to use depends highly

+on the particulars of the use case.

+

+Plotting

+--------

+Xarray and Iris have a large overlap of functionality when creating

+:term:`Matplotlib` plots and both support the plotting of multidimensional

+coordinates. This means the experience is largely similar using either package.

+

+Xarray supports further plotting backends through external packages (e.g. Bokeh

+through `hvPlot`_) and, if a user is already familiar with `pandas`_, the

+interface should be familiar. It also supports some plot types that Iris does

+not, so it can be used for a wider variety of plots, and it makes quick,

+"out of the box" customisations easier. However, any further customisation

+still requires knowledge of Matplotlib.

+

+Both packages can use :term:`Cartopy`. Iris does more of the work

+automatically here, creating Cartopy

+:class:`~cartopy.mpl.geoaxes.GeoAxes` for latitude and longitude coordinates,

+whereas Xarray users have to do this manually.

+

+Statistics

+----------

+Both libraries are quite comparable, with generally similar capabilities,

+performance and laziness. Iris is more specialised in some cases, offering

+some functions that are unique to it and masked-data tolerance in most

+statistics. Xarray seems more approachable, however, with solutions that are

+less specialised but more convenient (these tend to be wrappers to

+:term:`Dask` functions).

+

+Laziness and Multi-Processing with :term:`Dask`

+-----------------------------------------------

+Iris and Xarray both support lazy data and out-of-core processing through

+utilisation of Dask.

+

+While both Iris and Xarray expose :term:`NumPy` conveniences at the API level

+(e.g. the ``ndim`` attribute), only Xarray exposes Dask conveniences, for

+example :attr:`xarray.DataArray.chunks`, which gives the user direct control

+over the underlying Dask array chunks. The Iris API instead takes control of

+such concepts and user control is only possible by manipulating the underlying

+Dask array directly (accessed via :meth:`iris.cube.Cube.core_data`).

+

+:class:`xarray.DataArray`\ s comply with `NEP-18`_, allowing NumPy arrays to be

+based on them, and they also include the necessary extra members for Dask

+arrays to be based on them too. Neither of these is currently possible with

+Iris :class:`~iris.cube.Cube`\ s, although this is an ambition for the future.
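
To make the NEP-18 point concrete, here is a minimal sketch of the ``__array_function__`` protocol using only NumPy. ``Labelled`` is a hypothetical class invented for this illustration. It does not reflect how Xarray or Iris are implemented, and it assumes NumPy 1.17 or later, where the protocol is enabled by default.

```python
import numpy as np


class Labelled:
    """Hypothetical container: an array plus a name (illustration only)."""

    def __init__(self, values, name):
        self.values = np.asarray(values)
        self.name = name

    def __array_function__(self, func, types, args, kwargs):
        # NumPy calls this hook instead of running ``func`` directly.
        # Only top-level positional arguments are unwrapped here, which is
        # enough for simple reductions but not for every NumPy function.
        unwrapped = tuple(
            arg.values if isinstance(arg, Labelled) else arg for arg in args
        )
        result = func(*unwrapped, **kwargs)
        # Re-wrap array results so that the name survives the operation.
        if isinstance(result, np.ndarray):
            return Labelled(result, self.name)
        return result


temps = Labelled([[1.0, 2.0], [3.0, 4.0]], name="air_temperature")
col_means = np.mean(temps, axis=0)  # dispatched through __array_function__
print(type(col_means).__name__, col_means.name, col_means.values)
```

The design point is that the caller keeps using plain ``np.mean`` while the container decides what the result looks like. That is the kind of interoperability the paragraph above describes.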

+

+NetCDF File Control

+-------------------

+(More info: :term:`NetCDF Format`)

+

+Unlike Iris, Xarray generally provides full control of major file structures,

+i.e. dimensions + variables, including their order in the file. It mostly

+respects these in a file input, and can reproduce them on output.

+However, attribute handling is not so complete: like Iris, it interprets and

+modifies some recognised aspects, and can add some extra attributes not in the

+input.

+

+.. todo:

+ More detail on dates and fill values (@pp-mo suggestion).

+

+The handling of dates and fill values has some special problems here.

+

+Ultimately, nearly everything wanted in a particular desired result file can

+be achieved in Xarray, via provided override mechanisms (`loading keywords`_

+and the '`encoding`_' dictionaries).

+

+Missing Data

+------------

+Xarray uses :data:`numpy.nan` to represent missing values and this will support

+many simple use cases assuming the data are floats. Iris enables more

+sophisticated missing data handling by representing missing values as masks

+(:class:`numpy.ma.MaskedArray` for real data and :class:`dask.array.Array`

+for lazy data) which allows data to be any data type and to include either/both

+a mask and :data:`~numpy.nan`\ s.
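
The difference between the two approaches can be sketched with NumPy alone. The array below is made-up example data, and the snippet shows only the underlying NumPy behaviour, not any Iris or Xarray API.

```python
import numpy as np

# Hypothetical integer data in which the middle value is missing.
counts = np.array([3, 7, 5], dtype=np.int32)

# NaN approach: NaN is a floating point value, so the data must first be
# promoted to a float dtype before the missing point can be flagged.
as_float = counts.astype(np.float64)
as_float[1] = np.nan
nan_mean = np.nanmean(as_float)

# Mask approach: the missing point is flagged in a separate boolean mask,
# so the data keeps its original integer dtype.
masked = np.ma.masked_array(counts, mask=[False, True, False])
masked_mean = masked.mean()

print(as_float.dtype, nan_mean)
print(masked.dtype, masked_mean)
```

Both routes ignore the missing point when averaging. Only the masked array does so without changing the data type.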

+

+.. _cfxarray:

+

+`cf-xarray`_

+-------------

+Iris has a data model entirely based on :term:`CF Conventions`. Xarray has a

+data model based on :term:`NetCDF Format`, with cf-xarray acting as a

+translation layer into CF. Xarray/cf-xarray methods can be called and data

+accessed with CF-like arguments (e.g. axis, standard name), and there are some

+CF-specific utilities (similar to Iris utilities). Iris tends to cover more of

+CF and to be stricter about it.

+

+

+.. seealso::

+

+ * `Xarray IO notes on Iris`_

+ * `Xarray notes on other NetCDF libraries`_

+

+.. _Xarray IO notes on Iris: https://docs.xarray.dev/en/stable/user-guide/io.html#iris

+.. _Xarray notes on other NetCDF libraries: https://docs.xarray.dev/en/stable/getting-started-guide/faq.html#what-other-netcdf-related-python-libraries-should-i-know-about

+.. _loading keywords: https://docs.xarray.dev/en/stable/generated/xarray.open_dataset.html#xarray.open_dataset

+.. _encoding: https://docs.xarray.dev/en/stable/user-guide/io.html#writing-encoded-data

+.. _xESMF: https://github.com/pangeo-data/xESMF/

+.. _seaborn: https://seaborn.pydata.org/

+.. _hvPlot: https://hvplot.holoviz.org/

+.. _pandas: https://pandas.pydata.org/

+.. _NEP-18: https://numpy.org/neps/nep-0018-array-function-protocol.html

+.. _cf-xarray: https://github.com/xarray-contrib/cf-xarray

+.. _iris#4994: https://github.com/SciTools/iris/issues/4994

diff --git a/docs/src/community/plugins.rst b/docs/src/community/plugins.rst

new file mode 100644

index 0000000000..0d79d64623

--- /dev/null

+++ b/docs/src/community/plugins.rst

@@ -0,0 +1,68 @@

+.. _namespace package: https://packaging.python.org/en/latest/guides/packaging-namespace-packages/

+

+.. _community_plugins:

+

+Plugins

+=======

+

+Iris supports **plugins** under the ``iris.plugins`` `namespace package`_.

+This allows packages that extend Iris' functionality to be developed and

+maintained independently, while still being installed into ``iris.plugins``

+instead of a separate package. For example, a plugin may provide loaders or

+savers for additional file formats, or alternative visualisation methods.

+

+

+Using plugins

+-------------

+

+Once a plugin is installed, it can be used either via the

+:func:`iris.use_plugin` function, or by importing it directly:

+

+.. code-block:: python

+

+ import iris

+

+ iris.use_plugin("my_plugin")

+ # OR

+ import iris.plugins.my_plugin

+

+

+Creating plugins

+----------------

+

+The choice of a `namespace package`_ makes writing a plugin relatively

+straightforward: it simply needs to appear as a folder within ``iris/plugins``,

+then can be distributed in the same way as any other package. An example

+repository layout:

+

+.. code-block:: text

+

+ + lib

+ + iris

+ + plugins

+ + my_plugin

+ - __init__.py

+ - (more code...)

+ - README.md

+ - pyproject.toml

+ - setup.cfg

+ - (other project files...)

+

+In particular, note that there must **not** be any ``__init__.py`` files at

+higher levels than the plugin itself.
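
The effect of leaving out the higher-level ``__init__.py`` files can be tried in isolation. The sketch below builds a throwaway copy of the layout above in a temporary directory and imports the plugin by name. ``demo_ns`` is a hypothetical stand-in for ``iris`` so that the snippet runs without Iris installed. The ``use_plugin`` helper here is likewise an illustrative assumption and not the Iris implementation.

```python
import importlib
import sys
import tempfile
from pathlib import Path

# Recreate lib/<namespace>/plugins/<plugin>/ in a temporary directory.
root = Path(tempfile.mkdtemp())
plugin_dir = root / "lib" / "demo_ns" / "plugins" / "my_plugin"
plugin_dir.mkdir(parents=True)

# Only the plugin itself gets an __init__.py. The parent folders have none,
# which is what lets Python treat them as namespace packages.
(plugin_dir / "__init__.py").write_text("GREETING = 'hello from my_plugin'\n")

sys.path.insert(0, str(root / "lib"))
importlib.invalidate_caches()


def use_plugin(plugin_name):
    """Hypothetical helper: import a plugin from the namespace by name."""
    return importlib.import_module(f"demo_ns.plugins.{plugin_name}")


plugin = use_plugin("my_plugin")
print(plugin.GREETING)
```

Because ``demo_ns`` and ``demo_ns.plugins`` carry no ``__init__.py``, several independently installed distributions could each contribute their own folder under ``plugins`` without overwriting one another.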

+

+The package name - how it is referred to by PyPI/conda, specified by

+``metadata.name`` in ``setup.cfg`` - is recommended to include both "iris" and

+the plugin name. Continuing this example, its ``setup.cfg`` should include, at

+minimum:

+

+.. code-block:: ini

+

+ [metadata]

+ name = iris-my-plugin

+

+ [options]

+ packages = find_namespace:

+

+ [options.packages.find]

+ where = lib

diff --git a/docs/src/conf.py b/docs/src/conf.py

index 19f22e808f..576a099b90 100644

--- a/docs/src/conf.py

+++ b/docs/src/conf.py

@@ -20,15 +20,16 @@

# ----------------------------------------------------------------------------

import datetime

+from importlib.metadata import version as get_version

import ntpath

import os

from pathlib import Path

import re

+from subprocess import run

import sys

+from urllib.parse import quote

import warnings

-import iris

-

# function to write useful output to stdout, prefixing the source.

def autolog(message):

@@ -41,20 +42,33 @@ def autolog(message):

# -- Are we running on the readthedocs server, if so do some setup -----------

on_rtd = os.environ.get("READTHEDOCS") == "True"

+# This is the rtd reference to the version, such as: latest, stable, v3.0.1 etc

+rtd_version = os.environ.get("READTHEDOCS_VERSION")

+if rtd_version is not None:

+ # Make rtd_version safe for use in shields.io badges.

+ rtd_version = rtd_version.replace("_", "__")

+ rtd_version = rtd_version.replace("-", "--")

+ rtd_version = quote(rtd_version)

+

+# branch, tag, external (for pull request builds), or unknown.

+rtd_version_type = os.environ.get("READTHEDOCS_VERSION_TYPE")

+

+# For local testing purposes we can force being on RTD and the version

+# on_rtd = True # useful for testing

+# rtd_version = "latest" # useful for testing

+# rtd_version = "stable" # useful for testing

+# rtd_version_type = "tag" # useful for testing

+# rtd_version = "my_branch" # useful for testing

+

if on_rtd:

autolog("Build running on READTHEDOCS server")

# list all the READTHEDOCS environment variables that may be of use

- # at some point

autolog("Listing all environment variables on the READTHEDOCS server...")

for item, value in os.environ.items():

autolog("[READTHEDOCS] {} = {}".format(item, value))

-# This is the rtd reference to the version, such as: latest, stable, v3.0.1 etc

-# For local testing purposes this could be explicitly set latest or stable.

-rtd_version = os.environ.get("READTHEDOCS_VERSION")

-

# -- Path setup --------------------------------------------------------------

# If extensions (or modules to document with autodoc) are in another directory,
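
The ``rtd_version`` escaping added in this hunk can be exercised on its own. The sketch below wraps the same three steps in a function. The name ``shields_safe`` and the sample inputs are made up for illustration. It relies on the shields.io static badge convention that a doubled underscore or dash is rendered as a literal one.

```python
from urllib.parse import quote


def shields_safe(label):
    """Escape ``label`` for use inside a shields.io static badge path."""
    # Double the characters that shields.io treats specially.
    label = label.replace("_", "__")
    label = label.replace("-", "--")
    # Percent-encode anything that is not safe in a URL path.
    return quote(label)


for example in ("latest", "my_branch", "v3.4.0-rc0", "needs escaping"):
    print(example, "->", shields_safe(example))
```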

@@ -82,20 +96,11 @@ def autolog(message):

author = "Iris Developers"

# The version info for the project you're documenting, acts as replacement for

-# |version| and |release|, also used in various other places throughout the

-# built documents.

-

-# The short X.Y version.

-if iris.__version__ == "dev":

- version = "dev"

-else:

- # major.minor.patch-dev -> major.minor.patch

- version = ".".join(iris.__version__.split("-")[0].split(".")[:3])

-# The full version, including alpha/beta/rc tags.

-release = iris.__version__

-

-autolog("Iris Version = {}".format(version))

-autolog("Iris Release = {}".format(release))

+# |version|, also used in various other places throughout the built documents.

+version = get_version("scitools-iris")

+release = version

+autolog(f"Iris Version = {version}")

+autolog(f"Iris Release = {release}")

# -- General configuration ---------------------------------------------------
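
Reading the version from installed package metadata means the docs build no longer needs to import ``iris``. The sketch below tries the same lookup with a fallback for a distribution that is not installed. The ``docs_version`` helper and its ``"unknown"`` default are assumptions for illustration and are not part of this change.

```python
from importlib.metadata import PackageNotFoundError
from importlib.metadata import version as get_version


def docs_version(distribution, default="unknown"):
    """Return the installed version of ``distribution``, or ``default``."""
    try:
        return get_version(distribution)
    except PackageNotFoundError:
        # Raised when the distribution is not installed in this environment.
        return default


print(docs_version("scitools-iris"))
print(docs_version("a-distribution-that-is-not-installed"))
```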

@@ -153,12 +158,9 @@ def _dotv(version):

"sphinx_copybutton",

"sphinx.ext.napoleon",

"sphinx_panels",

- # TODO: Spelling extension disabled until the dependencies can be included

- # "sphinxcontrib.spelling",

"sphinx_gallery.gen_gallery",

"matplotlib.sphinxext.mathmpl",

"matplotlib.sphinxext.plot_directive",

- "image_test_output",

]

if skip_api == "1":

@@ -171,6 +173,7 @@ def _dotv(version):

# -- panels extension ---------------------------------------------------------

# See https://sphinx-panels.readthedocs.io/en/latest/

+panels_add_bootstrap_css = False

# -- Napoleon extension -------------------------------------------------------

# See https://sphinxcontrib-napoleon.readthedocs.io/en/latest/sphinxcontrib.napoleon.html

@@ -188,16 +191,6 @@ def _dotv(version):

napoleon_use_keyword = True

napoleon_custom_sections = None

-# -- spellingextension --------------------------------------------------------

-# See https://sphinxcontrib-spelling.readthedocs.io/en/latest/customize.html

-spelling_lang = "en_GB"

-# The lines in this file must only use line feeds (no carriage returns).

-spelling_word_list_filename = ["spelling_allow.txt"]

-spelling_show_suggestions = False

-spelling_show_whole_line = False

-spelling_ignore_importable_modules = True

-spelling_ignore_python_builtins = True

-

# -- copybutton extension -----------------------------------------------------

# See https://sphinx-copybutton.readthedocs.io/en/latest/

copybutton_prompt_text = r">>> |\.\.\. "

@@ -229,6 +222,8 @@ def _dotv(version):

"numpy": ("https://numpy.org/doc/stable/", None),

"python": ("https://docs.python.org/3/", None),

"scipy": ("https://docs.scipy.org/doc/scipy/", None),

+ "pandas": ("https://pandas.pydata.org/docs/", None),

+ "dask": ("https://docs.dask.org/en/stable/", None),

}

# The name of the Pygments (syntax highlighting) style to use.

@@ -246,6 +241,10 @@ def _dotv(version):

extlinks = {

"issue": ("https://github.com/SciTools/iris/issues/%s", "Issue #"),

"pull": ("https://github.com/SciTools/iris/pull/%s", "PR #"),

+ "discussion": (

+ "https://github.com/SciTools/iris/discussions/%s",

+ "Discussion #",

+ ),

}

# -- Doctest ("make doctest")--------------------------------------------------

@@ -257,43 +256,74 @@ def _dotv(version):

# The theme to use for HTML and HTML Help pages. See the documentation for

# a list of builtin themes.

#

-html_logo = "_static/iris-logo-title.png"

-html_favicon = "_static/favicon.ico"

-html_theme = "sphinx_rtd_theme"

+html_logo = "_static/iris-logo-title.svg"

+html_favicon = "_static/iris-logo.svg"

+html_theme = "pydata_sphinx_theme"

+

+# See https://pydata-sphinx-theme.readthedocs.io/en/latest/user_guide/configuring.html#configure-the-search-bar-position

+html_sidebars = {

+ "**": [

+ "custom_sidebar_logo_version",

+ "search-field",

+ "sidebar-nav-bs",

+ "sidebar-ethical-ads",

+ ]

+}

+# See https://pydata-sphinx-theme.readthedocs.io/en/latest/user_guide/configuring.html

html_theme_options = {

- "display_version": True,

- "style_external_links": True,

- "logo_only": "True",

+ "footer_items": ["copyright", "sphinx-version", "custom_footer"],

+ "collapse_navigation": True,

+ "navigation_depth": 3,

+ "show_prev_next": True,

+ "navbar_align": "content",

+ "github_url": "https://github.com/SciTools/iris",

+ "twitter_url": "https://twitter.com/scitools_iris",

+ # icons available: https://fontawesome.com/v5.15/icons?d=gallery&m=free

+ "icon_links": [

+ {

+ "name": "GitHub Discussions",

+ "url": "https://github.com/SciTools/iris/discussions",

+ "icon": "far fa-comments",

+ },

+ {

+ "name": "PyPI",

+ "url": "https://pypi.org/project/scitools-iris/",

+ "icon": "fas fa-box",

+ },

+ {

+ "name": "Conda",

+ "url": "https://anaconda.org/conda-forge/iris",

+ "icon": "fas fa-boxes",

+ },

+ ],

+ "use_edit_page_button": True,

+ "show_toc_level": 1,

+ # Omitted `theme-switcher` below to disable it

+ # Info: https://pydata-sphinx-theme.readthedocs.io/en/stable/user_guide/light-dark.html#configure-default-theme-mode

+ "navbar_end": ["navbar-icon-links"],

}

+rev_parse = run(["git", "rev-parse", "--short", "HEAD"], capture_output=True)

+commit_sha = rev_parse.stdout.decode().strip()

+

html_context = {

+ # pydata_theme

+ "github_repo": "iris",

+ "github_user": "scitools",

+ "github_version": "main",

+ "doc_path": "docs/src",

+ # default theme. Also disabled the button in the html_theme_options.

+ # Info: https://pydata-sphinx-theme.readthedocs.io/en/stable/user_guide/light-dark.html#configure-default-theme-mode

+ "default_mode": "light",

+ # custom

+ "on_rtd": on_rtd,

"rtd_version": rtd_version,

+ "rtd_version_type": rtd_version_type,

"version": version,

"copyright_years": copyright_years,

"python_version": build_python_version,

- # menu_links and menu_links_name are used in _templates/layout.html

- # to include some nice icons. See http://fontawesome.io for a list of

- # icons (used in the sphinx_rtd_theme)

- "menu_links_name": "Support",

- "menu_links": [

- (

- ' Source Code',

- "https://github.com/SciTools/iris",

- ),

- (

- ' GitHub Discussions',

- "https://github.com/SciTools/iris/discussions",

- ),

- (

- ' StackOverflow for "How Do I?"',

- "https://stackoverflow.com/questions/tagged/python-iris",

- ),

- (

- ' Legacy Documentation',

- "https://scitools.org.uk/iris/docs/v2.4.0/index.html",

- ),

- ],

+ "commit_sha": commit_sha,

}

# Add any paths that contain custom static files (such as style sheets) here,
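
The ``commit_sha`` lookup in this hunk assumes that ``git`` is on the path and that the build runs inside a checkout. A more defensive variant might look like the sketch below. The ``short_commit_sha`` helper and its fallback value are hypothetical and are not part of this change.

```python
from subprocess import run


def short_commit_sha(default="unknown"):
    """Return the short SHA of HEAD, or ``default`` if it cannot be read."""
    try:
        result = run(
            ["git", "rev-parse", "--short", "HEAD"],
            capture_output=True,
            text=True,
        )
    except OSError:
        # git is not installed or could not be executed.
        return default
    sha = result.stdout.strip()
    # A non-zero return code means we are not inside a git repository.
    if result.returncode != 0 or not sha:
        return default
    return sha


print(short_commit_sha())
```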

@@ -302,12 +332,24 @@ def _dotv(version):

html_static_path = ["_static"]

html_style = "theme_override.css"

+# this allows for using datatables: https://datatables.net/

+html_css_files = [

+ "https://cdn.datatables.net/1.10.23/css/jquery.dataTables.min.css",

+]

+

+html_js_files = [

+ "https://cdn.datatables.net/1.10.23/js/jquery.dataTables.min.js",

+]

+

# url link checker. Some links work but report as broken, lets ignore them.

# See https://www.sphinx-doc.org/en/1.2/config.html#options-for-the-linkcheck-builder

linkcheck_ignore = [

+ "http://catalogue.ceda.ac.uk/uuid/82adec1f896af6169112d09cc1174499",

"http://cfconventions.org",

"http://code.google.com/p/msysgit/downloads/list",

"http://effbot.org",

+ "https://help.github.com",

+ "https://docs.github.com",

"https://github.com",

"http://www.personal.psu.edu/cab38/ColorBrewer/ColorBrewer_updates.html",

"http://schacon.github.com/git",

@@ -316,6 +358,7 @@ def _dotv(version):

"https://software.ac.uk/how-cite-software",

"http://www.esrl.noaa.gov/psd/data/gridded/conventions/cdc_netcdf_standard.shtml",

"http://www.nationalarchives.gov.uk/doc/open-government-licence",

+ "https://www.metoffice.gov.uk/",

]

# list of sources to exclude from the build.

@@ -335,6 +378,11 @@ def _dotv(version):

"ignore_pattern": r"__init__\.py",

# force gallery building, unless overridden (see src/Makefile)

"plot_gallery": "'True'",

+ # force re-registering of nc-time-axis with matplotlib for each example,

+ # required for sphinx-gallery>=0.11.0

+ "reset_modules": (

+ lambda gallery_conf, fname: sys.modules.pop("nc_time_axis", None),

+ ),

}

# -----------------------------------------------------------------------------

diff --git a/docs/src/developers_guide/assets/developer-settings-github-apps.png b/docs/src/developers_guide/assets/developer-settings-github-apps.png

new file mode 100644

index 0000000000..a63994d087

Binary files /dev/null and b/docs/src/developers_guide/assets/developer-settings-github-apps.png differ

diff --git a/docs/src/developers_guide/assets/download-pem.png b/docs/src/developers_guide/assets/download-pem.png

new file mode 100644

index 0000000000..cbceb1304d

Binary files /dev/null and b/docs/src/developers_guide/assets/download-pem.png differ

diff --git a/docs/src/developers_guide/assets/generate-key.png b/docs/src/developers_guide/assets/generate-key.png

new file mode 100644

index 0000000000..ac894dc71b

Binary files /dev/null and b/docs/src/developers_guide/assets/generate-key.png differ

diff --git a/docs/src/developers_guide/assets/gha-token-example.png b/docs/src/developers_guide/assets/gha-token-example.png

new file mode 100644

index 0000000000..cba1cf6935

Binary files /dev/null and b/docs/src/developers_guide/assets/gha-token-example.png differ

diff --git a/docs/src/developers_guide/assets/install-app.png b/docs/src/developers_guide/assets/install-app.png

new file mode 100644

index 0000000000..31259de588

Binary files /dev/null and b/docs/src/developers_guide/assets/install-app.png differ

diff --git a/docs/src/developers_guide/assets/install-iris-actions.png b/docs/src/developers_guide/assets/install-iris-actions.png

new file mode 100644

index 0000000000..db16dee55b

Binary files /dev/null and b/docs/src/developers_guide/assets/install-iris-actions.png differ

diff --git a/docs/src/developers_guide/assets/installed-app.png b/docs/src/developers_guide/assets/installed-app.png

new file mode 100644

index 0000000000..ab87032393

Binary files /dev/null and b/docs/src/developers_guide/assets/installed-app.png differ

diff --git a/docs/src/developers_guide/assets/iris-actions-secret.png b/docs/src/developers_guide/assets/iris-actions-secret.png

new file mode 100644

index 0000000000..f32456d0f2

Binary files /dev/null and b/docs/src/developers_guide/assets/iris-actions-secret.png differ

diff --git a/docs/src/developers_guide/assets/iris-github-apps.png b/docs/src/developers_guide/assets/iris-github-apps.png

new file mode 100644

index 0000000000..50753532b7

Binary files /dev/null and b/docs/src/developers_guide/assets/iris-github-apps.png differ

diff --git a/docs/src/developers_guide/assets/iris-secrets-created.png b/docs/src/developers_guide/assets/iris-secrets-created.png

new file mode 100644

index 0000000000..19b0ba11dc

Binary files /dev/null and b/docs/src/developers_guide/assets/iris-secrets-created.png differ

diff --git a/docs/src/developers_guide/assets/iris-security-actions.png b/docs/src/developers_guide/assets/iris-security-actions.png

new file mode 100644

index 0000000000..7cbe3a7dc2

Binary files /dev/null and b/docs/src/developers_guide/assets/iris-security-actions.png differ

diff --git a/docs/src/developers_guide/assets/iris-settings.png b/docs/src/developers_guide/assets/iris-settings.png

new file mode 100644

index 0000000000..70714235c2

Binary files /dev/null and b/docs/src/developers_guide/assets/iris-settings.png differ

diff --git a/docs/src/developers_guide/assets/org-perms-members.png b/docs/src/developers_guide/assets/org-perms-members.png

new file mode 100644

index 0000000000..99fd8985e2

Binary files /dev/null and b/docs/src/developers_guide/assets/org-perms-members.png differ

diff --git a/docs/src/developers_guide/assets/repo-perms-contents.png b/docs/src/developers_guide/assets/repo-perms-contents.png

new file mode 100644

index 0000000000..4c325c334d

Binary files /dev/null and b/docs/src/developers_guide/assets/repo-perms-contents.png differ

diff --git a/docs/src/developers_guide/assets/repo-perms-pull-requests.png b/docs/src/developers_guide/assets/repo-perms-pull-requests.png

new file mode 100644

index 0000000000..812f5ef951

Binary files /dev/null and b/docs/src/developers_guide/assets/repo-perms-pull-requests.png differ

diff --git a/docs/src/developers_guide/assets/scitools-settings.png b/docs/src/developers_guide/assets/scitools-settings.png

new file mode 100644

index 0000000000..8d7e728ab5

Binary files /dev/null and b/docs/src/developers_guide/assets/scitools-settings.png differ

diff --git a/docs/src/developers_guide/assets/user-perms.png b/docs/src/developers_guide/assets/user-perms.png

new file mode 100644

index 0000000000..607c7dcdb6

Binary files /dev/null and b/docs/src/developers_guide/assets/user-perms.png differ

diff --git a/docs/src/developers_guide/assets/webhook-active.png b/docs/src/developers_guide/assets/webhook-active.png

new file mode 100644

index 0000000000..538362f335

Binary files /dev/null and b/docs/src/developers_guide/assets/webhook-active.png differ

diff --git a/docs/src/developers_guide/asv_example_images/commits.png b/docs/src/developers_guide/asv_example_images/commits.png

new file mode 100644

index 0000000000..4e0d695322

Binary files /dev/null and b/docs/src/developers_guide/asv_example_images/commits.png differ

diff --git a/docs/src/developers_guide/asv_example_images/comparison.png b/docs/src/developers_guide/asv_example_images/comparison.png

new file mode 100644

index 0000000000..e146d30696

Binary files /dev/null and b/docs/src/developers_guide/asv_example_images/comparison.png differ

diff --git a/docs/src/developers_guide/asv_example_images/scalability.png b/docs/src/developers_guide/asv_example_images/scalability.png

new file mode 100644

index 0000000000..260c3ef536

Binary files /dev/null and b/docs/src/developers_guide/asv_example_images/scalability.png differ

diff --git a/docs/src/developers_guide/ci_checks.png b/docs/src/developers_guide/ci_checks.png

old mode 100755

new mode 100644

index e088e03a66..54ab672b3c

Binary files a/docs/src/developers_guide/ci_checks.png and b/docs/src/developers_guide/ci_checks.png differ

diff --git a/docs/src/developers_guide/contributing_benchmarks.rst b/docs/src/developers_guide/contributing_benchmarks.rst

new file mode 100644

index 0000000000..65bc9635b6

--- /dev/null

+++ b/docs/src/developers_guide/contributing_benchmarks.rst

@@ -0,0 +1,62 @@

+.. include:: ../common_links.inc

+

+.. _contributing.benchmarks:

+

+Benchmarking

+============

+Iris includes architecture for benchmarking performance and other metrics of

+interest. This is done using the `Airspeed Velocity`_ (ASV) package.

+

+Full detail on the setup and how to run or write benchmarks is in

+`benchmarks/README.md`_ in the Iris repository.

+

+Continuous Integration

+----------------------

+The primary purpose of `Airspeed Velocity`_, and Iris' specific benchmarking

+setup, is to monitor for performance changes using statistical comparison

+between commits, and this forms part of Iris' continuous integration.

+

+Accurately assessing performance takes longer than functionality pass/fail

+tests, so the benchmark suite is not automatically run against open pull

+requests, instead it is **run overnight against each of the commits of the

+previous day** to check if any commit has introduced performance shifts.

+Detected shifts are reported in a new Iris GitHub issue.

+

+If a pull request author/reviewer suspects their changes may cause performance

+shifts, a convenience is available (currently via Nox) to replicate the

+overnight benchmark run but comparing the current ``HEAD`` with a requested

+branch (e.g. ``upstream/main``). Read more in `benchmarks/README.md`_.

+

+Other Uses

+----------

+Even when not statistically comparing commits, ASV's accurate execution time

+results - recorded using a sophisticated system of repeats - have other

+applications.

+

+* Absolute numbers can be interpreted providing they are recorded on a

+ dedicated resource.

+* Results for a series of commits can be visualised for an intuitive

+ understanding of when and why changes occurred.

+

+ .. image:: asv_example_images/commits.png

+ :width: 300

+

+* Parameterised benchmarks make it easy to visualise:

+

+ * Comparisons

+

+ .. image:: asv_example_images/comparison.png

+ :width: 300

+

+ * Scalability

+

+ .. image:: asv_example_images/scalability.png

+ :width: 300

+

+This also isn't limited to execution times. ASV can also measure memory demand,

+and even arbitrary numbers (e.g. file size, regridding accuracy), although

+without the repetition logic that execution timing has.

+

+

+.. _Airspeed Velocity: https://github.com/airspeed-velocity/asv

+.. _benchmarks/README.md: https://github.com/SciTools/iris/blob/main/benchmarks/README.md
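For readers new to ASV, the parameterised benchmarks mentioned in the new ``contributing_benchmarks.rst`` page can be sketched roughly as below. This is a minimal illustration, not an actual Iris benchmark: the class ``TimeSorting`` and the helper ``run_once`` are hypothetical names. It relies only on ASV's documented conventions (``params``, ``param_names``, ``setup`` and ``time_*`` methods). The real runner adds repeats and statistics that ``run_once`` does not attempt.

```python
import time


class TimeSorting:
    """ASV-style benchmark: ASV discovers ``time_*`` methods and times them."""

    # ASV runs each benchmark once per parameter value.
    params = [100, 1_000, 10_000]
    param_names = ["n_items"]

    def setup(self, n_items):
        # Runs before timing, so data creation is excluded from the result.
        self.data = list(range(n_items, 0, -1))

    def time_sorted(self, n_items):
        # Only the body of this method is timed by ASV.
        sorted(self.data)


def run_once(benchmark_cls):
    """Hypothetical stand-in for ASV's runner: a single pass, no repeats."""
    results = {}
    for n_items in benchmark_cls.params:
        bench = benchmark_cls()
        bench.setup(n_items)
        start = time.perf_counter()
        bench.time_sorted(n_items)
        results[n_items] = time.perf_counter() - start
    return results
```

Calling ``run_once(TimeSorting)`` returns one elapsed time per parameter value, which is the kind of data behind the scalability plots referenced in the page.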

diff --git a/docs/src/developers_guide/contributing_ci_tests.rst b/docs/src/developers_guide/contributing_ci_tests.rst

index 0257ff7cff..1d06434843 100644

--- a/docs/src/developers_guide/contributing_ci_tests.rst

+++ b/docs/src/developers_guide/contributing_ci_tests.rst

@@ -13,51 +13,50 @@ The `Iris`_ GitHub repository is configured to run checks against all its

branches automatically whenever a pull-request is created, updated or merged.

The checks performed are:

-* :ref:`testing_cirrus`

+* :ref:`testing_gha`

* :ref:`testing_cla`

* :ref:`pre_commit_ci`

-.. _testing_cirrus:

+.. _testing_gha:

-Cirrus-CI

-*********

+GitHub Actions

+**************

Iris unit and integration tests are an essential mechanism to ensure

that the Iris code base is working as expected. :ref:`developer_running_tests`

may be performed manually by a developer locally. However Iris is configured to

-use the `cirrus-ci`_ service for automated Continuous Integration (CI) testing.

+use `GitHub Actions`_ (GHA) for automated Continuous Integration (CI) testing.

-The `cirrus-ci`_ configuration file `.cirrus.yml`_ in the root of the Iris repository

-defines the tasks to be performed by `cirrus-ci`_. For further details

-refer to the `Cirrus-CI Documentation`_. The tasks performed during CI include:

+The Iris GHA YAML configuration files in the ``.github/workflows`` directory

+define the CI tasks to be performed. For further details

+refer to the `GitHub Actions`_ documentation. The tasks performed during CI include:

-* linting the code base and ensuring it adheres to the `black`_ format

* running the system, integration and unit tests for Iris

* ensuring the documentation gallery builds successfully

* performing all doc-tests within the code base

* checking all URL references within the code base and documentation are valid

-The above `cirrus-ci`_ tasks are run automatically against all `Iris`_ branches

+The above GHA tasks are run automatically against all `Iris`_ branches

on GitHub whenever a pull-request is submitted, updated or merged. See the

-`Cirrus-CI Dashboard`_ for details of recent past and active Iris jobs.

+`Iris GitHub Actions`_ dashboard for details of recent past and active CI jobs.

-.. _cirrus_test_env:

+.. _gha_test_env:

-Cirrus CI Test environment

---------------------------

+GitHub Actions Test Environment

+-------------------------------

-The test environment on the Cirrus-CI service is determined from the requirement files

-in ``requirements/ci/py**.yml``. These are conda environment files that list the entire

-set of build, test and run requirements for Iris.

+The CI test environments for our GHA are determined from the requirement files

+in ``requirements/ci/pyXX.yml``. These are conda environment files that list the top-level

+package dependencies for running and testing Iris.

For reproducible test results, these environments are resolved for all their dependencies

-and stored as lock files in ``requirements/ci/nox.lock``. The test environments will not

-resolve the dependencies each time, instead they will use the lock file to reproduce the

-same exact environment each time.

+and stored as conda lock files in the ``requirements/ci/nox.lock`` directory. The test environments

+will not resolve the dependencies each time, instead they will use the lock files to reproduce the

+exact same environment each time.

-**If you have updated the requirement yaml files with new dependencies, you will need to

+**If you have updated the requirement YAML files with new dependencies, you will need to

generate new lock files.** To do this, run the command::

python tools/update_lockfiles.py -o requirements/ci/nox.lock requirements/ci/py*.yml

@@ -68,49 +67,22 @@ or simply::

and add the changed lockfiles to your pull request.

+.. note::

+

+ If your installation of conda runs through Artifactory or another similar

+ proxy then you will need to amend that lockfile to use URLs that GitHub

+ Actions can access. A utility to strip out Artifactory exists in the

+ ``ssstack`` tool.

+

New lockfiles are generated automatically each week to ensure that Iris continues to be

tested against the latest available version of its dependencies.

Each week the yaml files in ``requirements/ci`` are resolved by a GitHub Action.

If the resolved environment has changed, a pull request is created with the new lock files.

-The CI test suite will run on this pull request and fixes for failed tests can be pushed to

-the ``auto-update-lockfiles`` branch to be included in the PR.

-Once a developer has pushed to this branch, the auto-update process will not run again until

-the PR is merged, to prevent overwriting developer commits.

-The auto-updater can still be invoked manually in this situation by going to the `GitHub Actions`_

-page for the workflow, and manually running using the "Run Workflow" button.

-By default, this will also not override developer commits. To force an update, you must

-confirm "yes" in the "Run Worflow" prompt.

-

-

-.. _skipping Cirrus-CI tasks:

-

-Skipping Cirrus-CI Tasks

-------------------------

-

-As a developer you may wish to not run all the CI tasks when you are actively

-developing e.g., you are writing documentation and there is no need for linting,

-or long running compute intensive testing tasks to be executed.

-

-As a convenience, it is possible to easily skip one or more tasks by setting

-the appropriate environment variable within the `.cirrus.yml`_ file to a

-**non-empty** string:

-

-* ``SKIP_LINT_TASK`` to skip `flake8`_ linting and `black`_ formatting

-* ``SKIP_TEST_MINIMAL_TASK`` to skip restricted unit and integration testing

-* ``SKIP_TEST_FULL_TASK`` to skip full unit and integration testing

-* ``SKIP_GALLERY_TASK`` to skip building the documentation gallery

-* ``SKIP_DOCTEST_TASK`` to skip running the documentation doc-tests

-* ``SKIP_LINKCHECK_TASK`` to skip checking for broken documentation URL references

-* ``SKIP_ALL_TEST_TASKS`` which is equivalent to setting ``SKIP_TEST_MINIMAL_TASK`` and ``SKIP_TEST_FULL_TASK``

-* ``SKIP_ALL_DOC_TASKS`` which is equivalent to setting ``SKIP_GALLERY_TASK``, ``SKIP_DOCTEST_TASK``, and ``SKIP_LINKCHECK_TASK``

-

-e.g., to skip the linting task, the following are all equivalent::

-

- SKIP_LINT_TASK: "1"

- SKIP_LINT_TASK: "true"

- SKIP_LINT_TASK: "false"

- SKIP_LINT_TASK: "skip"

- SKIP_LINT_TASK: "unicorn"

+The CI test suite will run on this pull request. If the tests fail, a developer

+will need to create a new branch based off the ``auto-update-lockfiles`` branch

+and add the required fixes to this new branch. If the fixes are made to the

+``auto-update-lockfiles`` branch these will be overwritten the next time the

+GitHub Action is run.

GitHub Checklist

@@ -146,9 +118,5 @@ pull-requests given the `Iris`_ GitHub repository `.pre-commit-config.yaml`_.

See the `pre-commit.ci dashboard`_ for details of recent past and active Iris jobs.

-

-.. _Cirrus-CI Dashboard: https://cirrus-ci.com/github/SciTools/iris

-.. _Cirrus-CI Documentation: https://cirrus-ci.org/guide/writing-tasks/

.. _.pre-commit-config.yaml: https://github.com/SciTools/iris/blob/main/.pre-commit-config.yaml

.. _pre-commit.ci dashboard: https://results.pre-commit.ci/repo/github/5312648

-.. _GitHub Actions: https://github.com/SciTools/iris/actions/workflows/refresh-lockfiles.yml
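The note added in ``contributing_ci_tests.rst`` about Artifactory-style proxies suggests a quick sanity check before committing regenerated lockfiles. The sketch below is one possible approach and is not part of the Iris tooling: the function name ``find_private_urls`` and the ``PUBLIC_HOSTS`` set are assumptions, and it assumes the lock files list one package URL per line (as in conda's explicit format).

```python
from urllib.parse import urlparse

# Assumption: hosts that GitHub Actions runners can reach.
PUBLIC_HOSTS = {"conda.anaconda.org", "repo.anaconda.com"}


def find_private_urls(lockfile_lines, public_hosts=PUBLIC_HOSTS):
    """Return the URLs in a lock file whose host is not in ``public_hosts``."""
    private = []
    for line in lockfile_lines:
        line = line.strip()
        # Skip blanks, comments and marker lines such as "@EXPLICIT".
        if not line.startswith(("http://", "https://")):
            continue
        if urlparse(line).hostname not in public_hosts:
            private.append(line)
    return private
```

An empty result would indicate that every URL points at a host in the allowed set. Any entries returned are candidates for rewriting before the lockfile is added to a pull request.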

diff --git a/docs/src/developers_guide/contributing_codebase_index.rst b/docs/src/developers_guide/contributing_codebase_index.rst

index 88986c0c7a..b59a196ff0 100644

--- a/docs/src/developers_guide/contributing_codebase_index.rst

+++ b/docs/src/developers_guide/contributing_codebase_index.rst

@@ -1,7 +1,7 @@

.. _contributing.documentation.codebase:

-Contributing to the Code Base

-=============================

+Working with the Code Base

+==========================

.. toctree::

:maxdepth: 3

diff --git a/docs/src/developers_guide/contributing_deprecations.rst b/docs/src/developers_guide/contributing_deprecations.rst

index 1ecafdca9f..0b22e2cbd2 100644

--- a/docs/src/developers_guide/contributing_deprecations.rst

+++ b/docs/src/developers_guide/contributing_deprecations.rst

@@ -25,29 +25,29 @@ deprecation is accompanied by the introduction of a new public API.

Under these circumstances the following points apply:

- - Using the deprecated API must result in a concise deprecation warning which

- is an instance of :class:`iris.IrisDeprecation`.

- It is easiest to call

- :func:`iris._deprecation.warn_deprecated`, which is a

- simple wrapper to :func:`warnings.warn` with the signature

- `warn_deprecation(message, **kwargs)`.

- - Where possible, your deprecation warning should include advice on

- how to avoid using the deprecated API. For example, you might

- reference a preferred API, or more detailed documentation elsewhere.

- - You must update the docstring for the deprecated API to include a

- Sphinx deprecation directive:

-

- :literal:`.. deprecated:: `

-

- where you should replace `` with the major and minor version

- of Iris in which this API is first deprecated. For example: `1.8`.

-

- As with the deprecation warning, you should include advice on how to

- avoid using the deprecated API within the content of this directive.

- Feel free to include more detail in the updated docstring than in the

- deprecation warning.

- - You should check the documentation for references to the deprecated

- API and update them as appropriate.

+- Using the deprecated API must result in a concise deprecation warning which

+ is an instance of :class:`iris.IrisDeprecation`.

+ It is easiest to call

+ :func:`iris._deprecation.warn_deprecated`, which is a

+ simple wrapper to :func:`warnings.warn` with the signature

+ `warn_deprecation(message, **kwargs)`.

+- Where possible, your deprecation warning should include advice on

+ how to avoid using the deprecated API. For example, you might

+ reference a preferred API, or more detailed documentation elsewhere.

+- You must update the docstring for the deprecated API to include a

+ Sphinx deprecation directive:

+

+ :literal:`.. deprecated:: `

+

+ where you should replace `` with the major and minor version

+ of Iris in which this API is first deprecated. For example: `1.8`.

+

+ As with the deprecation warning, you should include advice on how to

+ avoid using the deprecated API within the content of this directive.

+ Feel free to include more detail in the updated docstring than in the

+ deprecation warning.

+- You should check the documentation for references to the deprecated

+ API and update them as appropriate.

Changing a Default

------------------

@@ -64,14 +64,14 @@ it causes the corresponding public API to use its new default behaviour.

The following points apply in addition to those for removing a public

API:

- - You should add a new boolean attribute to :data:`iris.FUTURE` (by

- modifying :class:`iris.Future`) that controls the default behaviour

- of the public API that needs updating. The initial state of the new

- boolean attribute should be `False`. You should name the new boolean

- attribute to indicate that setting it to `True` will select the new

- default behaviour.

- - You should include a reference to this :data:`iris.FUTURE` flag in your

- deprecation warning and corresponding Sphinx deprecation directive.

+- You should add a new boolean attribute to :data:`iris.FUTURE` (by

+ modifying :class:`iris.Future`) that controls the default behaviour

+ of the public API that needs updating. The initial state of the new

+ boolean attribute should be `False`. You should name the new boolean

+ attribute to indicate that setting it to `True` will select the new

+ default behaviour.

+- You should include a reference to this :data:`iris.FUTURE` flag in your

+ deprecation warning and corresponding Sphinx deprecation directive.

Removing a Deprecation

@@ -94,11 +94,11 @@ and/or example code should be removed/updated as appropriate.

Changing a Default

------------------

- - You should update the initial state of the relevant boolean attribute

- of :data:`iris.FUTURE` to `True`.

- - You should deprecate setting the relevant boolean attribute of

- :class:`iris.Future` in the same way as described in

- :ref:`removing-a-public-api`.

+- You should update the initial state of the relevant boolean attribute

+ of :data:`iris.FUTURE` to `True`.

+- You should deprecate setting the relevant boolean attribute of

+ :class:`iris.Future` in the same way as described in

+ :ref:`removing-a-public-api`.

.. rubric:: Footnotes
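The deprecation bullets re-indented in ``contributing_deprecations.rst`` describe a warning wrapper plus a docstring directive. A minimal, self-contained sketch of that pattern follows. It deliberately does not import Iris: ``ExampleDeprecation``, ``old_function`` and ``new_function`` are hypothetical stand-ins, and the local ``warn_deprecated`` only imitates the described behaviour of a thin wrapper around ``warnings.warn``.

```python
import warnings


class ExampleDeprecation(UserWarning):
    """Hypothetical warning category, standing in for a project-specific one."""


def warn_deprecated(message, **kwargs):
    """Thin wrapper around ``warnings.warn`` that fixes the warning category."""
    # stacklevel=3 skips this wrapper and the deprecated function, so the
    # warning is attributed to the code that called the deprecated API.
    kwargs.setdefault("stacklevel", 3)
    warnings.warn(message, category=ExampleDeprecation, **kwargs)


def new_function(x):
    """Double ``x`` (the preferred API in this sketch)."""
    return 2 * x


def old_function(x):
    """Double ``x``.

    .. deprecated:: 1.8
        Use :func:`new_function` instead.
    """
    # The message is concise and tells the user what to use instead.
    warn_deprecated("old_function is deprecated; use new_function instead.")
    return new_function(x)
```

The deprecated function keeps working while emitting exactly one warning per call. Both the warning message and the docstring directive point the user at the replacement.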

diff --git a/docs/src/developers_guide/contributing_documentation_full.rst b/docs/src/developers_guide/contributing_documentation_full.rst

index 77b898c0f3..a470def683 100755

--- a/docs/src/developers_guide/contributing_documentation_full.rst

+++ b/docs/src/developers_guide/contributing_documentation_full.rst

@@ -1,3 +1,4 @@

+.. include:: ../common_links.inc

.. _contributing.documentation_full:

@@ -31,7 +32,7 @@ The build can be run from the documentation directory ``docs/src``.

The build output for the html is found in the ``_build/html`` sub directory.

When updating the documentation ensure the html build has *no errors* or

-*warnings* otherwise it may fail the automated `cirrus-ci`_ build.

+*warnings* otherwise it may fail the automated `Iris GitHub Actions`_ build.

Once the build is complete, if it is rerun it will only rebuild the impacted

build artefacts so should take less time.

@@ -60,27 +61,36 @@ If you wish to run a full clean build you can run::

make clean

make html

-This is useful for a final test before committing your changes.

+This is useful for a final test before committing your changes. Having built

+the documentation, you can view it in your default browser via::

+

+ make show

.. note:: In order to preserve a clean build for the html, all **warnings**

have been promoted to be **errors** to ensure they are addressed.

This **only** applies when ``make html`` is run.

-.. _cirrus-ci: https://cirrus-ci.com/github/SciTools/iris

-

.. _contributing.documentation.testing:

Testing

~~~~~~~

-There are a ways to test various aspects of the documentation. The

-``make`` commands shown below can be run in the ``docs`` or

-``docs/src`` directory.

+There are various ways to test aspects of the documentation.

Each :ref:`contributing.documentation.gallery` entry has a corresponding test.

-To run the tests::

+To run all the gallery tests::

+

+ pytest -v docs/gallery_tests/test_gallery_examples.py

+

+To run a test for a single gallery example, use the ``pytest -k`` option for

+pattern matching, e.g.::

+

+ pytest -v -k plot_coriolis docs/gallery_tests/test_gallery_examples.py

+

+If a gallery test fails, follow the instructions in :ref:`testing.graphics`.

- make gallerytest

+The ``make`` commands shown below can be run in the ``docs`` or ``docs/src``

+directory.

Many documentation pages includes python code itself that can be run to ensure

it is still valid or to demonstrate examples. To ensure these tests pass

@@ -103,19 +113,7 @@ adding it to the ``linkcheck_ignore`` array that is defined in the

If this fails check the output for the text **broken** and then correct

or ignore the url.

-.. comment

- Finally, the spelling in the documentation can be checked automatically via the

- command::

-

- make spelling

-

- The spelling check may pull up many technical abbreviations and acronyms. This

- can be managed by using an **allow** list in the form of a file. This file,

- or list of files is set in the `conf.py`_ using the string list

- ``spelling_word_list_filename``.

-

-

-.. note:: In addition to the automated `cirrus-ci`_ build of all the

+.. note:: In addition to the automated `Iris GitHub Actions`_ build of all the

documentation build options above, the

https://readthedocs.org/ service is also used. The configuration

of this held in a file in the root of the

@@ -148,7 +146,7 @@ can exclude the module from the API documentation. Add the entry to the

Gallery

~~~~~~~

-The Iris :ref:`sphx_glr_generated_gallery` uses a sphinx extension named

+The Iris :ref:`gallery_index` uses a sphinx extension named

`sphinx-gallery `_

section of the `SciTools`_ ogranization web site.

-

.. _GitHub getting started: https://docs.github.com/en/github/getting-started-with-github

+

+

+.. toctree::

+ :maxdepth: 1

+ :caption: Developers Guide

+ :name: development_index

+ :hidden:

+

+ gitwash/index

+ contributing_documentation

+ contributing_codebase_index

+ contributing_changes

+ github_app

+ release

+

+

+.. toctree::

+ :maxdepth: 1

+ :caption: Reference

+ :hidden:

+

+ ../generated/api/iris

+ ../whatsnew/index

+ ../techpapers/index

+ ../copyright

+ ../voted_issues

diff --git a/docs/src/developers_guide/contributing_graphics_tests.rst b/docs/src/developers_guide/contributing_graphics_tests.rst

index 1268aa2686..7964c008c5 100644

--- a/docs/src/developers_guide/contributing_graphics_tests.rst

+++ b/docs/src/developers_guide/contributing_graphics_tests.rst

@@ -2,72 +2,17 @@

.. _testing.graphics:

-Graphics Tests

-**************

+Adding or Updating Graphics Tests

+=================================

-Iris may be used to create various forms of graphical output; to ensure

-the output is consistent, there are automated tests to check against

-known acceptable graphical output. See :ref:`developer_running_tests` for

-more information.

-

-At present graphical tests are used in the following areas of Iris:

-

-* Module ``iris.tests.test_plot``

-* Module ``iris.tests.test_quickplot``

-* :ref:`sphx_glr_generated_gallery` plots contained in

- ``docs/gallery_tests``.

-

-

-Challenges

-==========

-

-Iris uses many dependencies that provide functionality, an example that

-applies here is matplotlib_. For more information on the dependences, see

-:ref:`installing_iris`. When there are updates to the matplotlib_ or a

-dependency of matplotlib, this may result in a change in the rendered graphical

-output. This means that there may be no changes to Iris_, but due to an

-updated dependency any automated tests that compare a graphical output to a

-known acceptable output may fail. The failure may also not be visually

-perceived as it may be a simple pixel shift.

-

-

-Testing Strategy

-================

-

-The `Iris Cirrus-CI matrix`_ defines multiple test runs that use

-different versions of Python to ensure Iris is working as expected.

-

-To make this manageable, the ``iris.tests.IrisTest_nometa.check_graphic`` test

-routine tests against multiple alternative **acceptable** results. It does

-this using an image **hash** comparison technique which avoids storing

-reference images in the Iris repository itself.

-

-This consists of:

-

- * The ``iris.tests.IrisTest_nometa.check_graphic`` function uses a perceptual

- **image hash** of the outputs (see https://github.com/JohannesBuchner/imagehash)

- as the basis for checking test results.

-

- * The hashes of known **acceptable** results for each test are stored in a

- lookup dictionary, saved to the repo file

- ``lib/iris/tests/results/imagerepo.json``

- (`link `_) .

-

- * An actual reference image for each hash value is stored in a *separate*

- public repository https://github.com/SciTools/test-iris-imagehash.

-

- * The reference images allow human-eye assessment of whether a new output is

- judged to be close enough to the older ones, or not.

-

- * The utility script ``iris/tests/idiff.py`` automates checking, enabling the

- developer to easily compare proposed new **acceptable** result images

- against the existing accepted reference images, for each failing test.

+.. note::

-The acceptable images for each test can be viewed online. The :ref:`testing.imagehash_index` lists all the graphical tests in the test suite and

-shows the known acceptable result images for comparison.

+ If a large number of image tests are failing due to an update to the

+ libraries used for image hashing, follow the instructions on

+ :ref:`refresh-imagerepo`.

-Reviewing Failing Tests

-=======================

+Generating New Results

+----------------------

When you find that a graphics test in the Iris testing suite has failed,

following changes in Iris or the run dependencies, this is the process

@@ -76,14 +21,24 @@ you should follow:

#. Create a new, empty directory to store temporary image results, at the path

``lib/iris/tests/result_image_comparison`` in your Iris repository checkout.

-#. **In your Iris repo root directory**, run the relevant (failing) tests

- directly as python scripts, or by using a command such as::

+#. Run the relevant (failing) tests directly as python scripts, or using

+ ``pytest``.

+

+The results of the failing image tests will now be available in

+``lib/iris/tests/result_image_comparison``.

+

+.. note::

+

+ The ``result_image_comparison`` folder is covered by a project

+ ``.gitignore`` setting, so those files *will not show up* in a

+ ``git status`` check.

- python -m unittest discover paths/to/test/files

+Reviewing Failing Tests

+-----------------------

-#. In the ``iris/lib/iris/tests`` folder, run the command::

+#. Run ``iris/lib/iris/tests/graphics/idiff.py`` with python, e.g.::

- python idiff.py

+ python idiff.py

This will open a window for you to visually inspect

side-by-side **old**, **new** and **difference** images for each failed

@@ -92,29 +47,28 @@ you should follow:

If the change is **accepted**:

- * the imagehash value of the new result image is added into the relevant

- set of 'valid result hashes' in the image result database file,

- ``tests/results/imagerepo.json``

+ * the imagehash value of the new result image is added into the relevant

+ set of 'valid result hashes' in the image result database file,

+ ``tests/results/imagerepo.json``

- * the relevant output file in ``tests/result_image_comparison`` is

- renamed according to the image hash value, as ``.png``.

- A copy of this new PNG file must then be added into the reference image

- repository at https://github.com/SciTools/test-iris-imagehash

- (See below).

+ * the relevant output file in ``tests/result_image_comparison`` is renamed

+ according to the test name. A copy of this new PNG file must then be added

+ into the ``iris-test-data`` repository, at

+ https://github.com/SciTools/iris-test-data (See below).

If a change is **skipped**:

- * no further changes are made in the repo.

+ * no further changes are made in the repo.

- * when you run ``iris/tests/idiff.py`` again, the skipped choice will be

- presented again.

+ * when you run ``iris/tests/idiff.py`` again, the skipped choice will be

+ presented again.

If a change is **rejected**:

- * the output image is deleted from ``result_image_comparison``.

+ * the output image is deleted from ``result_image_comparison``.

- * when you run ``iris/tests/idiff.py`` again, the skipped choice will not

- appear, unless the relevant failing test is re-run.

+ * when you run ``iris/tests/idiff.py`` again, the rejected choice will not

+ appear, unless the relevant failing test is re-run.

#. **Now re-run the tests**. The **new** result should now be recognised and the

relevant test should pass. However, some tests can perform *multiple*

@@ -123,46 +77,66 @@ you should follow:

re-run may encounter further (new) graphical test failures. If that

happens, simply repeat the check-and-accept process until all tests pass.

+#. You're now ready to :ref:`add-graphics-test-changes`

-Add Your Changes to Iris

-========================

-To add your changes to Iris, you need to make two pull requests (PR).

+Adding a New Image Test

+-----------------------

-#. The first PR is made in the ``test-iris-imagehash`` repository, at

- https://github.com/SciTools/test-iris-imagehash.

+If you attempt to run ``idiff.py`` when there are new graphical tests for which

+no baseline yet exists, you will get a warning that ``idiff.py`` is ``Ignoring

+unregistered test result...``. In this case,

- * First, add all the newly-generated referenced PNG files into the

- ``images/v4`` directory. In your Iris repo, these files are to be found

- in the temporary results folder ``iris/tests/result_image_comparison``.

+#. rename the relevant images from ``iris/tests/result_image_comparison`` by

- * Then, to update the file which lists available images,

- ``v4_files_listing.txt``, run from the project root directory::

+ * removing the ``result-`` prefix

- python recreate_v4_files_listing.py

+ * fully qualifying the test name if it isn't already (i.e. it should start

+ ``iris.tests...`` or ``gallery_tests...``)

- * Create a PR proposing these changes, in the usual way.

+#. run the tests in the mode that lets them create missing data (see

+ :ref:`create-missing`). This will update ``imagerepo.json`` with the new

+ test name and image hash.

-#. The second PR is created in the Iris_ repository, and

- should only include the change to the image results database,

- ``tests/results/imagerepo.json``.

- The description box of this pull request should contain a reference to

- the matching one in ``test-iris-imagehash``.

+#. and then add them to the Iris test data as covered in

+ :ref:`add-graphics-test-changes`.

-.. note::

- The ``result_image_comparison`` folder is covered by a project

- ``.gitignore`` setting, so those files *will not show up* in a

- ``git status`` check.

+.. _refresh-imagerepo:

-.. important::

+Refreshing the Stored Hashes

+----------------------------

- The Iris pull-request will not test successfully in Cirrus-CI until the

- ``test-iris-imagehash`` pull request has been merged. This is because there

- is an Iris_ test which ensures the existence of the reference images (uris)

- for all the targets in the image results database. It will also fail

- if you forgot to run ``recreate_v4_files_listing.py`` to update the

- image-listing file in ``test-iris-imagehash``.

+From time to time, a new version of the image hashing library will cause all

+image hashes to change. The image hashes stored in

+``tests/results/imagerepo.json`` can be refreshed using the baseline images

+stored in the ``iris-test-data`` repository (at

+https://github.com/SciTools/iris-test-data) using the script

+``tests/graphics/recreate_imagerepo.py``. Use the ``--help`` argument for the

+command line arguments.

-.. _Iris Cirrus-CI matrix: https://github.com/scitools/iris/blob/main/.cirrus.yml

+.. _add-graphics-test-changes:

+

+Add Your Changes to Iris

+------------------------

+

+To add your changes to Iris, you need to make two pull requests (PR).

+

+#. The first PR is made in the ``iris-test-data`` repository, at

+ https://github.com/SciTools/iris-test-data.

+

+ * Add all the newly-generated referenced PNG files into the

+ ``test_data/images`` directory. In your Iris repo, these files are to be found

+ in the temporary results folder ``iris/tests/result_image_comparison``.

+

+ * Create a PR proposing these changes, in the usual way.

+

+#. The second PR is the one that makes the changes you intend to the Iris_ repository.

+ The description box of this pull request should contain a reference to

+ the matching one in ``iris-test-data``.

+

+ * This PR should include updating the version of the test data in

+ ``.github/workflows/ci-tests.yml`` and

+ ``.github/workflows/ci-docs-tests.yml`` to the new version created by the

+ merging of your ``iris-test-data`` PR.
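The renaming step in "Adding a New Image Test" (remove the ``result-`` prefix, then fully qualify the test name) could be scripted along these lines. This is only a sketch under stated assumptions: ``baseline_name`` is a hypothetical helper, the example filenames are invented, and prepending ``iris.tests`` as the default package is a guess that may not match how a given test should be qualified.

```python
from pathlib import PurePosixPath

# Prefixes that the guide treats as "fully qualified" test names.
QUALIFIED_PREFIXES = ("iris.tests.", "gallery_tests.")
RESULT_PREFIX = "result-"


def baseline_name(result_filename, default_package="iris.tests"):
    """Map a result image filename to a candidate baseline image filename."""
    name = PurePosixPath(result_filename).name
    # Step 1: drop the "result-" prefix if it is present.
    if name.startswith(RESULT_PREFIX):
        name = name[len(RESULT_PREFIX):]
    # Step 2: qualify the test name if it is not already qualified.
    # Assumption: unqualified names belong under ``default_package``.
    if not name.startswith(QUALIFIED_PREFIXES):
        name = f"{default_package}.{name}"
    return name
```

Names that are already qualified pass through unchanged apart from the prefix removal, so the helper is safe to apply to every file in the results folder.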

diff --git a/docs/src/developers_guide/contributing_pull_request_checklist.rst b/docs/src/developers_guide/contributing_pull_request_checklist.rst

index 5afb461d68..57bc9fd728 100644

--- a/docs/src/developers_guide/contributing_pull_request_checklist.rst

+++ b/docs/src/developers_guide/contributing_pull_request_checklist.rst

@@ -16,8 +16,8 @@ is merged. Before submitting a pull request please consider this list.

#. **Provide a helpful description** of the Pull Request. This should include:

- * The aim of the change / the problem addressed / a link to the issue.

- * How the change has been delivered.

+ * The aim of the change / the problem addressed / a link to the issue.

+ * How the change has been delivered.

#. **Include a "What's New" entry**, if appropriate.

See :ref:`whats_new_contributions`.

@@ -31,10 +31,11 @@ is merged. Before submitting a pull request please consider this list.

#. **Check all new dependencies added to the** `requirements/ci/`_ **yaml

files.** If dependencies have been added then new nox testing lockfiles

- should be generated too, see :ref:`cirrus_test_env`.

+ should be generated too, see :ref:`gha_test_env`.

#. **Check the source documentation been updated to explain all new or changed

- features**. See :ref:`docstrings`.

+ features**. Note that we now use numpydoc docstrings. Any code you touch should

+ be updated to use the numpydoc docstring format. See :ref:`docstrings`.

#. **Include code examples inside the docstrings where appropriate**. See

:ref:`contributing.documentation.testing`.

@@ -42,8 +43,6 @@ is merged. Before submitting a pull request please consider this list.

#. **Check the documentation builds without warnings or errors**. See

:ref:`contributing.documentation.building`

-#. **Check for any new dependencies in the** `.cirrus.yml`_ **config file.**

-

#. **Check for any new dependencies in the** `readthedocs.yml`_ **file**. This

file is used to build the documentation that is served from

https://scitools-iris.readthedocs.io/en/latest/

@@ -51,12 +50,10 @@ is merged. Before submitting a pull request please consider this list.

#. **Check for updates needed for supporting projects for test or example

data**. For example:

- * `iris-test-data`_ is a github project containing all the data to support

- the tests.

- * `iris-sample-data`_ is a github project containing all the data to support

- the gallery and examples.

- * `test-iris-imagehash`_ is a github project containing reference plot

- images to support Iris :ref:`testing.graphics`.

+ * `iris-test-data`_ is a github project containing all the data to support

+ the tests.

+ * `iris-sample-data`_ is a github project containing all the data to support

+ the gallery and examples.

If new files are required by tests or code examples, they must be added to

the appropriate supporting project via a suitable pull-request. This pull

diff --git a/docs/src/developers_guide/contributing_running_tests.rst b/docs/src/developers_guide/contributing_running_tests.rst

index ab36172283..f60cedba05 100644

--- a/docs/src/developers_guide/contributing_running_tests.rst

+++ b/docs/src/developers_guide/contributing_running_tests.rst

@@ -5,13 +5,22 @@

Running the Tests

*****************

-Using setuptools for Testing Iris

-=================================

+There are two options for running the tests:

-.. warning:: The `setuptools`_ ``test`` command was deprecated in `v41.5.0`_. See :ref:`using nox`.

+* Use an environment you created yourself. This requires more manual steps to

+ set up, but gives you more flexibility. For example, you can run a subset of

+ the tests or use ``python`` interactively to investigate any issues. See

+ :ref:`test manual env`.

-A prerequisite of running the tests is to have the Python environment

-setup. For more information on this see :ref:`installing_from_source`.

+* Use ``nox``. This will automatically generate an environment and run test

+ sessions consistent with our GitHub continuous integration. See :ref:`using nox`.

+

+.. _test manual env:

+

+Testing Iris in a Manually Created Environment

+==============================================

+

+To create a suitable environment for running the tests, see :ref:`installing_from_source`.

Many Iris tests will use data that may be defined in the test itself, however

this is not always the case as sometimes example files may be used. Due to

@@ -32,81 +41,76 @@ The example command below uses ``~/projects`` as the parent directory::

git clone git@github.com:SciTools/iris-test-data.git

export OVERRIDE_TEST_DATA_REPOSITORY=~/projects/iris-test-data/test_data
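
Before launching a long test run, it can help to confirm that the variable exported above points at a real directory. The following is a minimal sketch of such a check, not the mechanism Iris itself uses to locate the data; the function name ``find_test_data_dir`` is hypothetical:

```python
import os
from pathlib import Path


def find_test_data_dir(environ=None):
    """Hypothetical pre-flight check (not part of Iris).

    Return the directory named by OVERRIDE_TEST_DATA_REPOSITORY,
    or None if the variable is unset or is not an existing directory.
    """
    environ = os.environ if environ is None else environ
    value = environ.get("OVERRIDE_TEST_DATA_REPOSITORY")
    if not value:
        return None
    # Expand a leading "~" in case the value was set without shell expansion.
    path = Path(value).expanduser()
    return path if path.is_dir() else None


if __name__ == "__main__":
    found = find_test_data_dir()
    if found is None:
        print("Test data directory not configured; data-dependent tests may be skipped.")
    else:
        print(f"Using test data from {found}")
```
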

-All the Iris tests may be run from the root ``iris`` project directory via::

+All the Iris tests may be run from the root ``iris`` project directory using

+``pytest``. For example::

- python setup.py test

-

-You can also run a specific test, the example below runs the tests for

-mapping::

+ pytest -n 2

- cd lib/iris/tests

- python test_mapping.py

+will run the tests across two processes. For more options, use the command

+``pytest -h``. Below is a trimmed example of the output::

-When running the test directly as above you can view the command line options

-using the commands ``python test_mapping.py -h`` or

-``python test_mapping.py --help``.

+ ============================= test session starts ==============================

+ platform linux -- Python 3.10.5, pytest-7.1.2, pluggy-1.0.0

+ rootdir: /path/to/git/clone/iris, configfile: pyproject.toml, testpaths: lib/iris

+ plugins: xdist-2.5.0, forked-1.4.0

+ gw0 I / gw1 I

+ gw0 [6361] / gw1 [6361]

-.. tip:: A useful command line option to use is ``-d``. This will display

- matplotlib_ figures as the tests are run. For example::

-

- python test_mapping.py -d

-

- You can also use the ``-d`` command line option when running all

- the tests but this will take a while to run and will require the

- manual closing of each of the figures for the tests to continue.

-

-The output from running the tests is verbose as it will run ~5000 separate

-tests. Below is a trimmed example of the output::

-

- running test

- Running test suite(s): default

-

- Running test discovery on iris.tests with 2 processors.

- test_circular_subset (iris.tests.experimental.regrid.test_regrid_area_weighted_rectilinear_src_and_grid.TestAreaWeightedRegrid) ... ok

- test_cross_section (iris.tests.experimental.regrid.test_regrid_area_weighted_rectilinear_src_and_grid.TestAreaWeightedRegrid) ... ok

- test_different_cs (iris.tests.experimental.regrid.test_regrid_area_weighted_rectilinear_src_and_grid.TestAreaWeightedRegrid) ... ok

- ...

+ ........................................................................ [ 1%]

+ ........................................................................ [ 2%]

+ ........................................................................ [ 3%]

...

- test_ellipsoid (iris.tests.unit.experimental.raster.test_export_geotiff.TestProjection) ... SKIP: Test requires 'gdal'.

- test_no_ellipsoid (iris.tests.unit.experimental.raster.test_export_geotiff.TestProjection) ... SKIP: Test requires 'gdal'.

+ .......................ssssssssssssssssss............................... [ 99%]

+ ........................ [100%]

+ =============================== warnings summary ===============================

...

+ -- Docs: https://docs.pytest.org/en/stable/how-to/capture-warnings.html

+ =========================== short test summary info ============================

+ SKIPPED [1] lib/iris/tests/experimental/test_raster.py:152: Test requires 'gdal'.

+ SKIPPED [1] lib/iris/tests/experimental/test_raster.py:155: Test requires 'gdal'.

...

- test_slice (iris.tests.test_util.TestAsCompatibleShape) ... ok

- test_slice_and_transpose (iris.tests.test_util.TestAsCompatibleShape) ... ok

- test_transpose (iris.tests.test_util.TestAsCompatibleShape) ... ok

-

- ----------------------------------------------------------------------

- Ran 4762 tests in 238.649s

-

- OK (SKIP=22)

+ ========= 6340 passed, 21 skipped, 1659 warnings in 193.57s (0:03:13) ==========

There may be some tests that have been **skipped**. This is due to a Python

decorator being present in the test script that will intentionally skip a test

if a certain condition is not met. In the example output above there are

-**22** skipped tests, at the point in time when this was run this was primarily

-due to an experimental dependency not being present.

-

+**21** skipped tests. When this was run, they were skipped because an

+experimental dependency was not present.

.. tip::

The most common reason for tests to be skipped is when the directory for the

``iris-test-data`` has not been set, which would show output such as::

- test_coord_coord_map (iris.tests.test_plot.Test1dScatter) ... SKIP: Test(s) require external data.

- test_coord_coord (iris.tests.test_plot.Test1dScatter) ... SKIP: Test(s) require external data.

- test_coord_cube (iris.tests.test_plot.Test1dScatter) ... SKIP: Test(s) require external data.

-

+ SKIPPED [1] lib/iris/tests/unit/fileformats/test_rules.py:157: Test(s) require external data.

+ SKIPPED [1] lib/iris/tests/unit/fileformats/pp/test__interpret_field.py:97: Test(s) require external data.

+ SKIPPED [1] lib/iris/tests/unit/util/test_demote_dim_coord_to_aux_coord.py:29: Test(s) require external data.

+

All Python decorators that skip tests will be defined in

``lib/iris/tests/__init__.py`` with a function name with a prefix of

``skip_``.
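
As a rough illustration of the pattern described above, a ``skip_``-prefixed decorator can be built on the standard library's ``unittest.skipIf``. This is a sketch only: the names ``skip_missing_module``, ``DemoTests`` and ``run_demo`` are hypothetical and are not taken from ``lib/iris/tests/__init__.py``, where the real decorators may be implemented differently.

```python
import importlib.util
import unittest


def skip_missing_module(module_name):
    """Hypothetical helper: skip a test when ``module_name`` is not importable."""
    missing = importlib.util.find_spec(module_name) is None
    return unittest.skipIf(missing, f"Test requires '{module_name}'.")


class DemoTests(unittest.TestCase):
    @skip_missing_module("json")  # standard library module, so the test runs
    def test_runs(self):
        self.assertTrue(True)

    @skip_missing_module("no_such_module_for_demo")  # not importable, so skipped
    def test_skipped(self):
        self.fail("This body is never executed.")


def run_demo():
    """Run the demo test case and return the unittest result object."""
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(DemoTests)
    return unittest.TextTestRunner(verbosity=0).run(suite)


if __name__ == "__main__":
    run_demo()
```

The condition is evaluated once, when the decorator is applied, so a skipped test is reported as skipped rather than failed and its body never runs.
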

+You can also run a specific test module. The example below runs the tests for

+mapping::

+

+ cd lib/iris/tests

+ python test_mapping.py

+

+When running the test directly as above you can view the command line options

+using the commands ``python test_mapping.py -h`` or

+``python test_mapping.py --help``.

+

+.. tip:: A useful command line option to use is ``-d``. This will display

+ matplotlib_ figures as the tests are run. For example::

+

+ python test_mapping.py -d

.. _using nox:

Using Nox for Testing Iris

==========================

-Iris has adopted the use of the `nox`_ tool for automated testing on `cirrus-ci`_

+The `nox`_ tool has been adopted for automated testing on `Iris GitHub Actions`_

and also locally on the command-line for developers.

`nox`_ is similar to `tox`_, but instead leverages the expressiveness and power of a Python

@@ -124,15 +128,12 @@ automates the process of: